1. Are these spills very common?

Huge blowouts in US waters (explosions, followed by fire, occurring when wells are being drilled) are uncommon. The last one was the Santa Barbara Union Oil Blowout in 1969 – a little over 40 years ago. The leak lasted 11 days, and the amount of the spill was estimated to be about 200,000 gallons (roughly 5,000 barrels of oil), so it was smaller than the current spill. But it was close to shore, and the oil damaged beaches, besides affecting wildlife.

Much more common are oil spills, typically occurring when a ship powered by oil, or a ship carrying oil, collides with another object. The biggest recent oil spill in US waters was the Exxon Valdez oil spill, which occurred in Alaska in 1989, when an oil tanker ran aground and spilled 10.9 million gallons (about 260,000 barrels of oil). If the current spill is 1,000 barrels a day, the Exxon Valdez spill would be the equivalent of the spill continuing for more than eight months. No one expects the current spill to continue for that long.
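The conversions in this section can be checked with a few lines of Python. This is only a rough sketch: it assumes the standard 42 US gallons per barrel and an average month of about 30.4 days, and the function and variable names are illustrative rather than taken from any source.

```python
# Rough check of the spill-size comparisons, assuming 42 US gallons per barrel.
GALLONS_PER_BARREL = 42
DAYS_PER_MONTH = 30.4  # assumed average month length


def barrels_to_gallons(barrels):
    """Convert barrels of oil to US gallons."""
    return barrels * GALLONS_PER_BARREL


def gallons_to_barrels(gallons):
    """Convert US gallons to barrels of oil."""
    return gallons / GALLONS_PER_BARREL


def days_to_match(total_barrels, barrels_per_day):
    """Days a steady leak would need to run to release total_barrels."""
    return total_barrels / barrels_per_day


# Santa Barbara (1969): about 5,000 barrels
santa_barbara_gallons = barrels_to_gallons(5_000)

# Exxon Valdez (1989): 10.9 million gallons
exxon_valdez_barrels = gallons_to_barrels(10_900_000)

# Current leak rate assumed in the text: 1,000 barrels a day
days = days_to_match(exxon_valdez_barrels, 1_000)
months = days / DAYS_PER_MONTH

print(f"Santa Barbara: {santa_barbara_gallons:,.0f} gallons")
print(f"Exxon Valdez: {exxon_valdez_barrels:,.0f} barrels")
print(f"Equivalent leak duration: {days:.0f} days (~{months:.1f} months)")
```

Under these assumptions, 5,000 barrels is 210,000 gallons, and 10.9 million gallons is close to 260,000 barrels, or roughly eight and a half months of leakage at 1,000 barrels a day.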

An analysis from NOAA shows that there have been many oil spills from ships over the years. Modern double hull oil tankers are not as susceptible to spills, but the many small ships (especially low budget, unlicensed ships) carrying goods of all types can and do run aground, causing smaller spills. The US Coast Guard has regulations to try to prevent problems of this type.

Besides spills, there are naturally occurring underground seeps that allow hydrocarbons to enter the water. In fact, it is these seeps that led to the discoveries of many of the oil deposits found at sea. National Geographic talks about huge underwater asphalt volcanos being discovered off of California, caused by underwater eruptions of hydrocarbons. These eruptions likely caused huge oil slicks.

2. How did the blowout occur?

The earliest oil wells in the Gulf of Mexico were in shallow waters near the coast. But as these wells have become depleted, it has been necessary to drill in ever-deeper waters. When one drills in deeper water, the challenges are greater–the pressures are greater, the temperature of the oil is higher, and the stresses on the metals involved are greater.

The oil industry is creating ever-more technologically advanced equipment to deal with these issues, but the fact remains that it is virtually impossible to solve every new problem that may arise through computer simulations. If one tweaks one part of the equipment to make it stronger (to deal with the higher pressures, and greater temperature differential between the hot oil and the cold water), it can cause unforeseen problems with another system that interacts with it.

Unfortunately, there is an element of trial and error whenever technology attempts to overcome new hurdles. These issues aren’t unique to oil and gas–they are just as much challenges to any new technology, including offshore wind and carbon capture and storage technology. While one would like to move smoothly from one technology to the next, in a short time frame, one really must test equipment in the real world. This means progress tends not to be as fast as one would like: it often is punctuated by setbacks when something that looks like it would work in computer simulations, doesn’t really work, or when some unforeseen combination of events takes place.

We don’t yet know precisely what happened to cause the blowout–there will no doubt be months of investigations. The basic idea of what happened is that Transocean, under contract with BP, was attempting to drill a new well, not too far from existing wells in a deep water area of the Gulf of Mexico. The well was almost complete–in fact, the well seemed to be far enough along that the danger of blowout appeared to be very low. The casing had been cemented, and work was being done on getting a production pipe installed.

Apparently, a pressure surge occurred that could not be controlled. While the equipment includes all kinds of controls and alarms, and a huge 450-ton device called a blowout preventer, somehow it was still not possible to control the hydrocarbon flow. At such high pressures, some of the natural gas separated from the oil within the hydrocarbon stream and ignited, causing the explosion.

Some of our readers have provided their ideas as to what might have happened. Rockman has suggested that the strength of the pipes (to withstand the underwater pressure) might have made it impossible for the shear rams in the blowout preventer to slam shut and cut off the pipe, as they were intended to do. Westexas has suggested that perhaps metallurgical failure at such great depths may have contributed to the accident. It is possible that there was some element of human error as well. Without a thorough investigation, it is impossible to know exactly what happened, and even then, there are likely to be gaps in our knowledge.

3. What is being done to stop the leak?

For the last several days, BP has been trying to use sub-sea robots, operating at 5,000 feet below the surface, to engage the blowout preventer and turn off the flow, which seems to amount to about 1,000 barrels (42,000 gallons) per day. With each day that passes, the chance of this working would seem to go down. If the blowout preventer didn’t activate properly originally, and hasn’t engaged during past attempts by robots, why would a new attempt work any better?

There are two alternative approaches BP is using to cut off the flow. One approach is to drill a second well to intercept the first well, and inject a special heavy fluid to cut off the flow. Workers will then permanently seal the first well. This procedure is expected to take several months.

The other approach is designing and fabricating an underwater collection device (dome) that would trap escaping oil near the sea floor and funnel it for collection. According to NOAA, this approach has been used successfully in shallower water but never at this depth (approximately 5,000 feet). NOAA reports construction of such a dome has already begun.

Until one of these plans works, the approach is to try to control the oil that rises to the surface. According to one source:

BP is throwing all the resources it has available at the spill, so the cost to the company may be substantial. It has deployed 32 spill response ships and five aircraft to spray up to 100,000 gallons of chemical dispersant on the slick and skim oil from the surface of the water and deploy floating barriers to trap the oil.

Another approach that is being tried is burning the oil trapped on the surface. This approach would seem to work best when seas are calm.

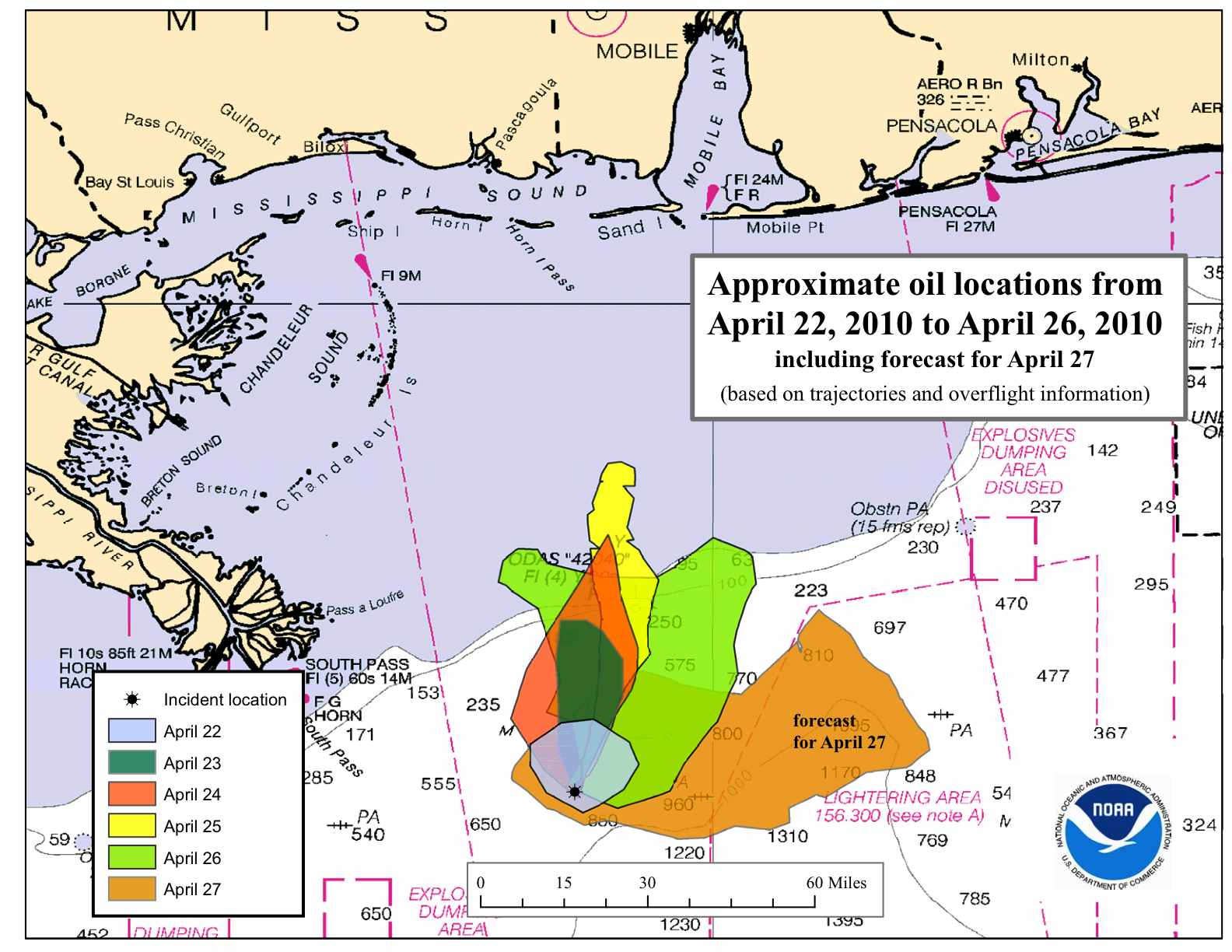

Even with these approaches, there is a significant chance the spill will reach shore, perhaps by this weekend. Even if it remains at sea, it can be damaging to marine life.

4. How important are wells such as this one for oil production?

In general, the world is running short of good places to drill for oil. There are a few places where oil still can be extracted inexpensively, but these are becoming fewer and fewer in number. What we seem to have left is expensive hard-to-extract oil, especially in this hemisphere.

In the absence of new deep water wells in the Gulf of Mexico, Gulf oil production would likely be declining.

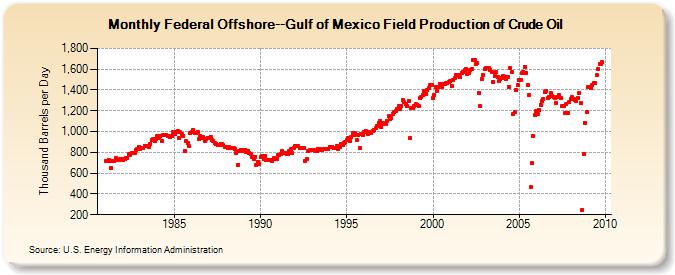

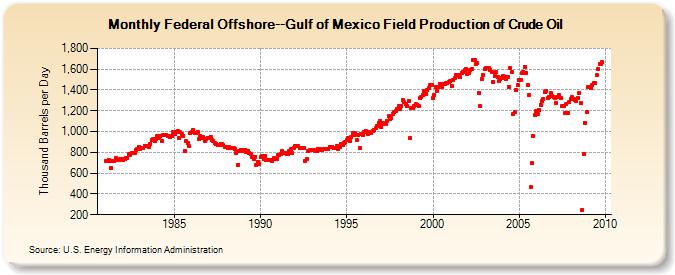

Figure 2. Graph of Gulf of Mexico Production in Federal Offshore Region, produced by the EIA.

It can be seen from Figure 2 that between 2003 and 2008, oil production in this region was declining, but in the last couple of years, as deep water wells have started coming on board, it has begun increasing again. If companies are successful in drilling more deep water wells, oil production in the Gulf of Mexico may grow again, perhaps to 2 or even 2.5 million barrels a day, before resuming its decline. This would not be a huge amount relative to world production of crude oil of 73 million barrels a day, but compared to the US’s crude oil production of 5.4 million barrels a day, this would be a substantial part. In the absence of deep water drilling, Gulf of Mexico production would likely continue to decline, as it did in the 2003 to 2008 period.
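The comparison in the preceding paragraph is simple division, and a short Python sketch makes the proportions explicit. It uses only the figures quoted in the text (73 million barrels a day of world crude, 5.4 million barrels a day of US crude, and a possible Gulf of Mexico output of 2 to 2.5 million barrels a day); the names are illustrative, and the Gulf figures are the article's speculation, not a forecast.

```python
# Share of world and US crude production represented by a hypothetical
# Gulf of Mexico output, using the figures quoted in the text.
WORLD_CRUDE_MBD = 73.0  # million barrels a day, world crude oil
US_CRUDE_MBD = 5.4      # million barrels a day, US crude oil


def share_percent(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


for gulf_mbd in (2.0, 2.5):  # possible Gulf of Mexico production levels
    world_share = share_percent(gulf_mbd, WORLD_CRUDE_MBD)
    us_share = share_percent(gulf_mbd, US_CRUDE_MBD)
    print(f"Gulf at {gulf_mbd} mb/d: {world_share:.1f}% of world, "
          f"{us_share:.0f}% of US crude production")
```

On these numbers, such a level of Gulf production would be around 3% of world crude output but roughly 37% to 46% of current US crude output.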

Governmental agencies like to talk about “liquids” as if they were all equivalent to crude oil, but they really aren’t. On a “liquids” basis, the US is said to produce 9.3 million barrels a day, including ethanol, natural gas liquids, and the expansion in volume that occurs when refineries combine US natural gas with crude oil imported from overseas as part of the refining process. (As mentioned above, crude oil production is only 5.4 million barrels a day.) Deep water oil is generally good quality oil that can be refined to produce the products our economy needs, whereas these other products are of lower energy value, and generally lower price. Losing high quality oil would be much more of a blow than losing lower quality products that have been added to the reporting category, to disguise our true shortage of high-quality crude.

The world is at this point struggling with financial difficulties. We like to think that our current order of things, in which we can depend on imports from abroad, will continue indefinitely. But I do not think this will be the case. As more and more countries (Greece, Portugal, and Spain, to start with) struggle with their debts, oil exporters will have less and less willingness to sell oil to those with questionable credit. Many in this country think that the US is immune to this problem, but if it turns out that the US has difficulties as well, we may lose even more true oil than a comparison based on overstated “liquids” would seem to suggest. In that case, we would be very happy to have some home-produced oil, at least for a short time, while it lasts.

5. The natural order of things.

Most of us don’t take time to think about what the true natural order of the world is. If we go back 100,000 years, there were no cars, no superhighways, and no oil wells. There were also very many fewer people, no wind turbines, and no computers. There was no problem with ocean acidification. Fish were abundant in the seas. The world was very different then.

The natural order of things keeps changing, on its own, without our intervention. One type of animal dies out, and another replaces it. Plants undergo natural selection, so as to adapt to changes in the environment.

In the fossil fuel world, we know there have been changes as well, and there will continue to be. Where there are not cap rocks on oil supplies, hydrocarbons tend to migrate upward. When they do this, microbes in the atmosphere tend to break them down. Eventually, oil that is not under a tight cap rock tends to disappear–which is why we are having so much difficulty finding oil now.

The oil that escapes as oil spills will also migrate upward to the water surface, just as it does when it migrates upward through oil seeps. In warm, sunny areas, like the area around the Gulf of Mexico, hydrocarbons that migrate upward will biodegrade fairly quickly–within a few years. Some residue may remain for longer. This will be sticky at first, but then turn to asphalt, before it too breaks down.

It seems to me that as world oil supplies deplete, the world will tend back toward what I have described as the natural order of things. We won’t be able to support as many people on the earth. Highways will disappear, as governments no longer have funds to resurface them. Without roads, automobiles will no longer be useful. Cows and pigs will decline in numbers. Fish will return to the seas. Plant and animal life will change, to fill in the gap we left. We will really have to fight to avoid this natural rearrangement, and even then, we are not likely to be very successful.

We have a large number of people who classify themselves as environmentalists. They have a very different view of the world, and what is important for the long term. One of their concerns is that beaches not be despoiled by what looks like asphalt from oil spills. But these people seem to have little concern about the long stripes of asphalt that are being used for interstate highways. They are very concerned about the tens of thousands of birds that have been killed by oil spills, but they are not concerned (or not very much concerned) about the billions of fish that are being removed from the oceans by fishermen every year. It seems to me that a major part of their concern is not really for the environment–it is for maintaining business as usual (BAU). Having pretty beaches, now. A nice place for their (many) children. Their plan seems to be for a light green BAU.

6. Where should we be putting our energies now?

If we lived in a world with plenty of energy, my vote would be with the environmentalists. If we don’t really need the oil, why not just close the industry down? No need to worry about asphalt on our beaches, or our fishermen getting big enough catches. If we need more oil, we can just use our large financial surpluses to buy more oil from abroad. With all the energy, we probably wouldn’t need to worry about enough jobs for the US population either.

But if we are headed toward an energy-constrained world, it seems to me that we need to be thinking about our choices more clearly.

Do we really have options for oil that are better? Can we count on world imports? Should we expect Brazil to do real-time experiments, to try to figure out how to extract its deep water oil, and then export it to us? Should we count on the Saudis, with their unaudited reserves and questionable “spare capacity” to keep up their production? Should we expect someone, somewhere, to find four or six new “Saudi Arabias” of additional oil over the next 20 years?

If we can’t depend on imports, do we have more locally produced oil that we can ramp up? From an environmental point of view, would ramping up the oil sands in Canada be better? Or how about oil shale, out in the dry areas of the US West? Would we be willing to devote scarce water supplies in that area to ramping up oil production?

It is easy to say that there should be more rules for the oil and gas industry, but there can be a downside to these rules as well. More rules will delay extraction, and will likely lead to a smaller amount of oil extracted, but at a higher price. There is also the question of whether the rules will really prevent oil spills. If the issue is really that new technology has to be tested live, no amount of rules will really fix the situation. There will always be accidents.

If one is thinking about new rules, one should think about the Sarbanes-Oxley Act. It imposed substantial rules for US public companies after a number of major corporate accounting scandals. But how much good have these rules really done in preventing the financial crisis and aggressive behavior by banks? It seems to me that new rules are usually designed to prevent last year’s problem, not next year’s problem.

If we don’t have oil, would we rather have coal, and a CO2 sequestration site underneath our homes, as technicians test to see whether their computer models are really correct with respect to how well the CO2 will stay underground? (If it doesn’t stay underground, it could form a low lying cloud and smother those in its way.)

There are indirect implications of a loss of oil, too. A fisherman may have more fish to catch, if all oil spills are prevented. But if, in the process, the fisherman doesn’t have enough fuel for his boat, or his customers don’t have jobs and can’t buy the fish, he is not as much better off as he would seem to be.

Given all of the environmental concerns regarding oil and gas, I can understand why many people would decide that the best decision is to err in the direction of caution regarding future oil production. But if this is the route we take (and even if it isn’t), we need to be thinking about where this puts us relative to the natural order of things. Presumably, with less oil, the downslope in the direction of the natural order of things will be even quicker. While some may not object to this, it would seem to me that it would make the urgency of adapting to the new world order even greater than it would be otherwise. This would suggest that we should be putting our efforts into energy sources that are truly renewable with only local materials–small scale wind, run of the stream hydro, and solar of the type that might be used to heat hot water a bit, but not to create electricity. These would not allow us to maintain BAU, or a world very close to BAU.

To me, there are no easy choices.
