By Scott M. Fulton, III, Betanews

It ended up being a somewhat different PDC conference than we had anticipated, and even to a certain extent, than we were led to believe. Maybe this was due in part to a little intentional misdirection to help generate surprise, but in the end, the big stories here in Los Angeles this week were more evolutionary than revolutionary. That was actually quite all right with attendees I spoke with this week, most of whom are just fine with one less thing to turn their worlds upside down. It’s tough enough for many of these good people to hold onto their jobs every week.

We’ll start our conference wrap-up with a look at the flashpoints (remind me to call Score Productions for a jingle to go with that) we talked about at the beginning of the week, and we’ll follow up with the topic that crept in under the radar when we weren’t expecting it.

Making up for UAC, or, making Windows 7 seem less like Vista. This was absolutely the theme of “Day 0,” which featured the day-long workshops. At this point, Windows engineers have absolutely no problem with the notion of disowning Vista, disavowing it, even though it was technically a stairstep toward making Windows 7 possible. But it is now perfectly permissible to acknowledge the performance hardships Vista faced, and let go of the past in order to move forward.

Mark Russinovich leads the way in this department, and the fact that he’s appreciated leads others to follow suit. During his annual talk on “Kernel Improvements” — which he expanded this year to a two-parter — Russinovich spoke about the way that the timing of Windows’ response to user interactions was adjusted to give the user more reassurance that something was happening, rather than the sinking suspicion that nothing was happening.

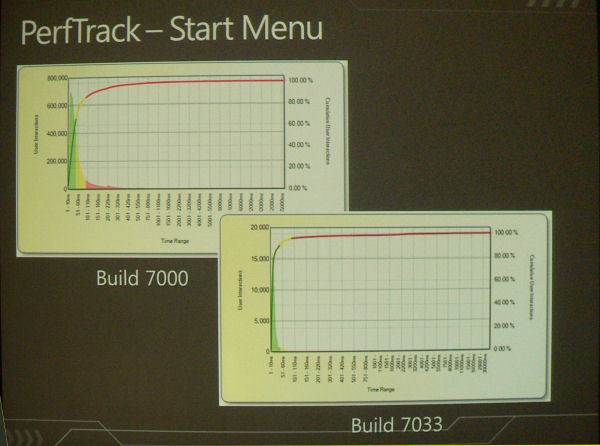

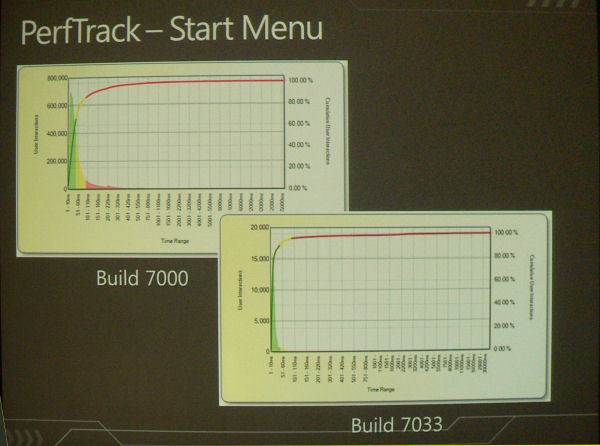

In an explanation of a user telemetry service he helped get off the ground called PerfTrack, he told attendees, “We went through and found roughly 300 places in the system where you interact with something, and there’s a beginning and then an end where you go, ‘Okay, that’s done,’ and optimized the performance of those user-visible interactions. We instrumented those begin-and-ends with data points, which collects timing information and sends that up to a Web service…and for each one of these interactions, we define what’s considered ‘great’ performance, what’s considered ‘okay’ performance, and what’s considered Vista — I mean, uh, ‘bad,’” he explained, with a little grin afterward that appeared borrowed from Jay Leno. “And then if we end up in that ‘okay’ or ‘bad,’ what we do is, selectively turn on more instrumentation using ETW [Event Tracing for Windows] — instrumentation of file accesses, Registry activity, context switches, page faults — and then we collect that information from a sampling of customer machines that are showing that kind of behavior.

“We feed that back to the product teams, they go analyze those and figure out, ‘Why is their component sluggish in those scenarios?’ and optimize that.”
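Russinovich’s description boils down to a simple loop: time each begin/end interaction, compare the duration against per-interaction targets for “great” and “okay,” and switch on heavier tracing only for the slow cases. The Python sketch below illustrates that idea under loudly stated assumptions: the interaction names, the millisecond thresholds, and the functions `classify`, `needs_detailed_trace`, and `summarize` are all hypothetical, since PerfTrack’s actual targets and code were not shown, and the ETW step is reduced to a yes/no flag rather than real tracing.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    great_ms: float  # duration at or below this counts as "great"
    okay_ms: float   # at or below this (but above great_ms) is "okay"; slower is "bad"


# Hypothetical per-interaction targets; PerfTrack's real values were not disclosed.
THRESHOLDS = {
    "start_menu_open": Thresholds(great_ms=100, okay_ms=300),
    "explorer_window_open": Thresholds(great_ms=200, okay_ms=500),
}


def classify(interaction: str, begin_ms: float, end_ms: float) -> str:
    """Bucket one begin/end timing as 'great', 'okay', or 'bad'."""
    t = THRESHOLDS[interaction]
    duration = end_ms - begin_ms
    if duration <= t.great_ms:
        return "great"
    if duration <= t.okay_ms:
        return "okay"
    return "bad"


def needs_detailed_trace(bucket: str) -> bool:
    """Only 'okay' or 'bad' interactions would trigger extra (ETW-style) instrumentation."""
    return bucket in ("okay", "bad")


def summarize(samples):
    """Count buckets across (interaction, begin_ms, end_ms) samples from many machines."""
    return Counter(classify(name, begin, end) for name, begin, end in samples)


samples = [
    ("start_menu_open", 0, 80),
    ("start_menu_open", 0, 250),
    ("start_menu_open", 0, 900),
]
for name, begin, end in samples:
    bucket = classify(name, begin, end)
    print(name, end - begin, bucket, needs_detailed_trace(bucket))
print(summarize(samples))
```

Counting buckets across many sampled machines is, roughly, the kind of aggregate that lets a team see a distribution move toward the quick side from one build to the next.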

One of the results he demonstrated, shown here in this pair of charts, compares user-reported Start menu lag times across two builds of the Windows 7 beta, with the instances leaning more toward the quick side of the chart than the slow side.

The fact that performance matters was one of the key themes of PDC 2009, and attendees greeted that message with enthusiasm — or, maybe more accurately, with appreciation that the company had finally received the message. But there are still lessons to be learned here that can be applied to other product areas, if anybody out there is listening.

Why Windows Azure? The major theme of Day 1 was the ability to scale services up — scaling local services up to the data center, and data center services up (or down, depending on your application) to Microsoft’s cloud provider, Windows Azure.

Last year at this time, Microsoft went to bat with essentially nothing: no real definition of an Azure application, no clear understanding of who the customers would be, and absolutely no clue as to the business model. But now we know that services will be rendered on a utility basis like Amazon EC2, and we have a much clearer concept of the customer groups Azure will address. One is the small business that has never before considered data center applications; another is the class of customer that needs to plan for exceptional spikes in traffic during unusual situations, but can’t afford to maintain that high capacity 24/7; and the third is the big customer building a new class of application that has never before been considered on any platform.

Channeling customers to Microsoft’s cloud will be “Dallas,” its code name for large-capacity data bank services typically open for mining by the general public, which should eventually be given a typically Microsoft-sounding name; and AppFabric, the company’s new mix-and-match component applications system built on the IIS 7 platform. But in neither of these cases is Microsoft particularly inventing the wheel; and as I heard from a plurality of attendees this week, Microsoft is entering another crowded field of contenders (including SalesForce.com and IBM) where the market is already saturated. Success in this venture is by no means assured.

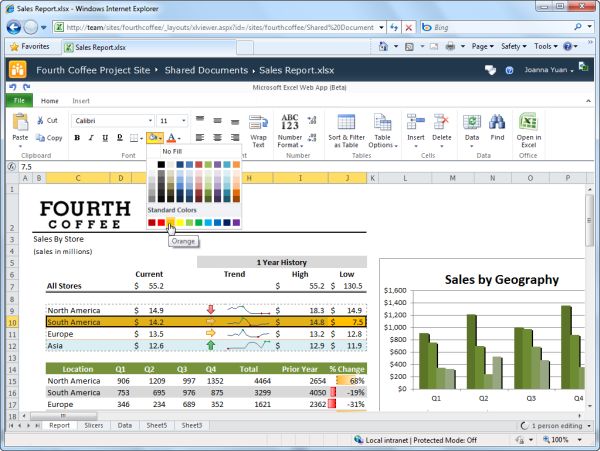

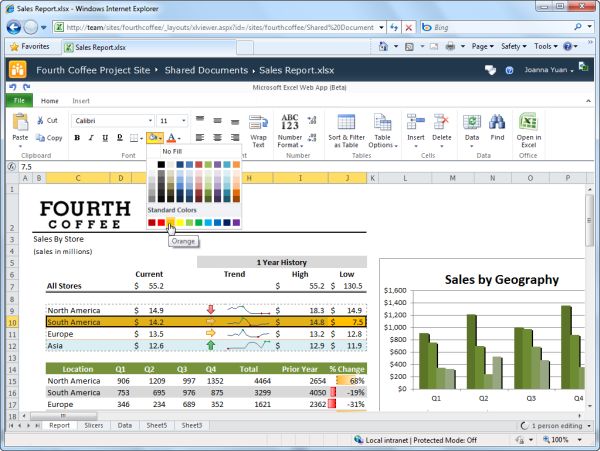

What will Office Web Apps do? Less than we once thought, apparently. Office Web Apps apparently remain strong at displaying “rich content” created with the real Office applications. But since Office Web Apps will be free to everyone (for sensible reasons), the ability to create the same depth of rich content online will be artificially limited.

Since many businesses use Excel as a kind of database, or as a window into databases kept elsewhere, Excel’s online counterpart will be the most restricted. Word may suffer the least, however, since composing respectable-looking correspondence from wherever one happens to be is a pressing need that Word Web App can easily fulfill.

Making the case for Office 2010. We expected Microsoft Office to be the star of Wednesday’s keynote, with demos of new functionality that, if it wasn’t major, would at least have been advertised as fresh and new. It was not to be. Although we did have an opportunity to speak with an Office product manager (more on that in the coming days), the message Microsoft was sending this year was very different.

In the past, folks used to ask why a consumer applications suite was being prominently featured at a conference geared towards developers. The answer from Microsoft typically was, because Office is a platform, and developers build to platforms. The message Microsoft sent this year was that Office was not a platform. And that’s a problem, because if that’s true, there’s no conference for Office. The excuse for the lack of Windows Mobile news was that it was a topic for MIX, the conference for Web developers set for next spring in Las Vegas.

So does Office wait for TechEd? All of a sudden, this major profit center seems homeless.

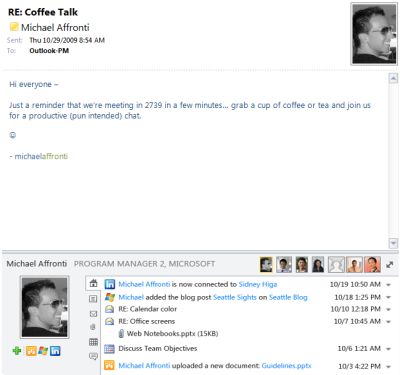

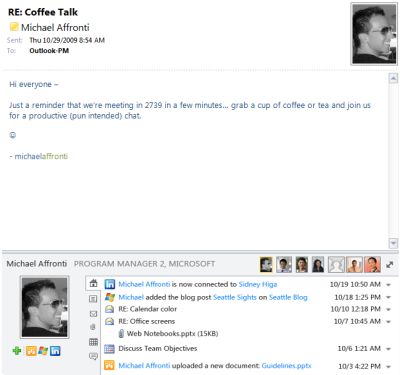

There was a little buzz devoted to something called the Outlook Social Connector plug-in, a new tool for integrating individuals’ social media contacts within Office’s communications app. Deals with social network hosts such as LinkedIn were announced. In one respect, that does address consumer concerns; in another, it’s a little ironic. Here we have a situation where people take the time to broadcast their identities over multiple social services on purpose as a way to spread out…only to discover the need for a kind of “identity vacuum” to pull them back in again to one cohesive chord.

What we did see from the Office 2010 public beta (released Monday, then released Tuesday, then “launched” Wednesday) let us know that if Microsoft truly is listening to its customers and acting on their telemetry, then the word they’re saying most often must be, “Whoa!”

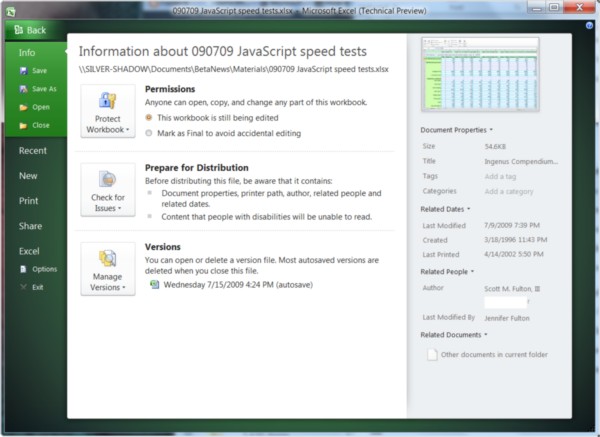

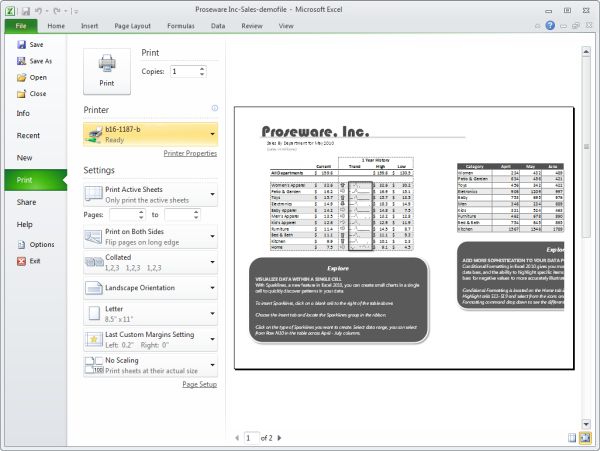

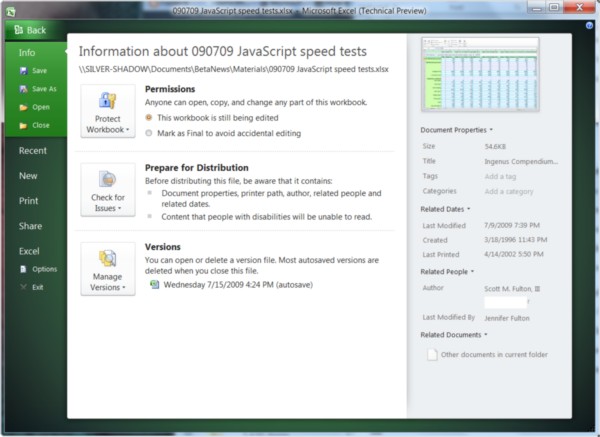

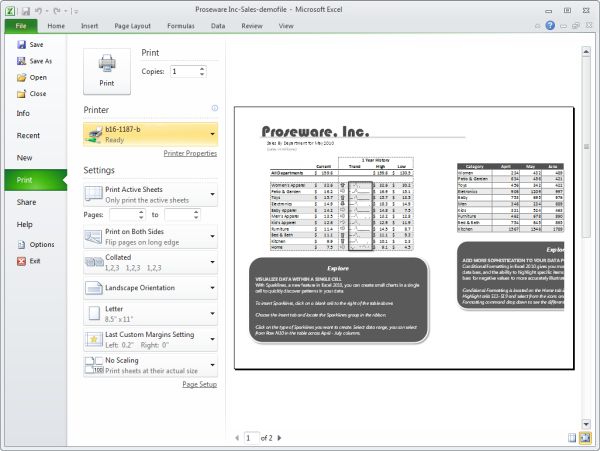

During the Technical Preview phase, Microsoft unveiled its BackStage concept: a way of organizing all the preparatory content of an application, such as print preview and preferences, in a more dimly lit, cooler arrangement, making you almost want to whisper when you talk about it. The screenshot above shows BackStage in the Excel 2010 Technical Preview.

This is the same BackStage in Excel 2010 Beta 1. It’s more conservative in several obvious regards, including the staging. But notice also something very important: The “Office button,” which premiered in Office 2007 and which flattened down to become an icon menu tab in the Tech Preview, has now returned to being the File menu. If customers have been asking, “Where’s File/Save?” then you have to wonder when they started asking, and how long they’ve been at it.

The new flavor of Visual Studio is already the old flavor. When you’re dealing with a development platform unto itself, the beta version is often, unofficially but certainly, the working edition for many developers. And VS 2010 is already on Beta 2 now. More than one session presenter this week asked for a show of hands as to how many folks were already using Beta 2 as their development platform, and in each case, a majority of hands went up.

Will virtualization envelop Windows? Hell if I know. One of the hottest topics of prior conferences was something of a dud this year, and that’s not good for a company that is actually behind in its ability to virtualize 64-bit platforms on 32-bit systems, a feature Sun’s VirtualBox and VMware already provide. But once Hyper-V’s lack of live migration was fixed, virtualization took something of a breather this year, though it wasn’t off the radar altogether.

The push toward online identity. Indeed, this ended up being the wildcard topic of the show. The principal security and architectural problem faced not only by developers but by administrators as well is enabling a secure single sign-on platform for local and remote applications. With multiple vendors supporting even more authentication protocols than there are vendors (or so it appears), this goal would seem impossible to achieve.

Microsoft is working to address this in its upcoming Windows Identity Foundation library, which will depend on a push for Active Directory Federation Services 2.0, a way to get AD out there to servers that aren’t running Windows. But just getting all hosted apps vendors on board with AD is a colossal task, made more difficult by a “competitive” spirit among application and security vendors that works against the very spirit of communication and federation they need to accomplish the goal of common identity. We will be talking more about this in the coming days, because we learned a lot about it at PDC.

Now, there’s something I’m missing. Yes, Scott Guthrie, I know I missed you in my list of headliners…and I’m sorry, it was inadvertent, and I apologize. Though I do know Brian Goldfarb gave you heck about it. But there’s something else, let’s see, I’m trying to recall…help me out, Brian…

Oh thank you, Scott, much obliged. Silverlight 4. This one should have been on our radar for certain. Silverlight stole the show on Wednesday, and was much of the talk among developers on Thursday. The new version will provide 1080p video, which everyone wanted. And it will provide authenticated access to system services outside the sandbox, which everyone wants.

If Office Web Apps were to run on Silverlight 4, you would get access to the right-click context menu, a critical feature of regular Office 2007 and Office 2010 that’s difficult to make up for with the ribbon alone. Silverlight 4’s access to system devices will make it feasible for developers to craft iTunes-like applications for smartphones that are tethered to PCs…and maybe even applications running on the smartphones themselves, and not just on Windows Phones.

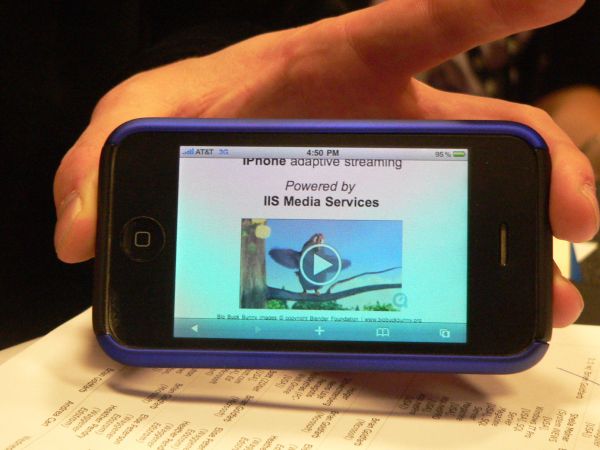

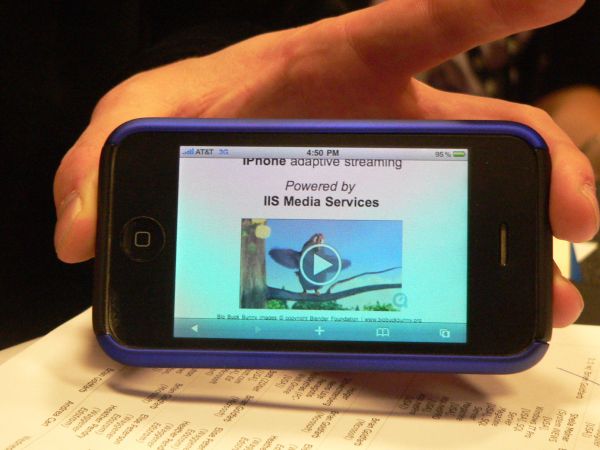

Which reminds me, there was that one Guthrie demo Wednesday that bit the bottom of the bit bucket, with that cool-looking phone. Did anyone ever make that work? Brian Goldfarb to the rescue once again. Yes, it is indeed possible to perform adaptive streaming of movies to the iPhone using Silverlight. We talked at length with Goldfarb (more on that too in coming days), and here’s a preview of coming attractions:

“We’ve worked with Apple to create a server-side-based solution with IIS Media Services; and what we’re doing is taking content that’s encoded for smooth streaming and enabling the content owner to say, ‘I want to enable the iPhone.’”

It was certainly more of an evolutionary than a revolutionary tone at this year’s PDC, but attendees seemed comfortable with that this time around. Here was one strange phenomenon we’ve never noticed before: Attendance increased with later days. Wednesday attendance was noticeably higher for sessions and the keynote than for the previous day, and that was despite news of the big laptop giveaway being kept under lock and key. And Thursday — which has often been a day for “leftovers” — ended up being packed as well, including with attendees who brought those shiny new Acer multitouch laptops with them.

Now, there’s something that hasn’t been touched on: Acer. Think about that for a moment. This is the same company that publicly dissed Vista in 2006 for being a non-event for consumers, practically leading the wave of complaints that followed. And here it is lending its name to an event that not only promotes Windows 7, but prototypes its proper use (from Microsoft’s perspective) in all computing. Microsoft let Acer show everyone else what quick bootup and clean performance are supposed to look like.

That’s the biggest indicator of Lessons Learned we saw all week.

Copyright Betanews, Inc. 2009
