I’m spending this week at IEEE ICASSP 2010 in Dallas. ICASSP stands for “International Conference on Acoustics, Speech and Signal Processing”, and it’s one of those enormous meetings with a couple of thousand attendees. This one has more than 120 sessions, with presentations on topics ranging from “Pareto-Optimal Solutions of Nash Bargaining Resource Allocation Games with Spectral Mask and Total Power Constraints” to “Matching Canvas Weave Patterns from Processing X-Ray Images of Master Paintings”.

The large number of parallel sessions makes it impossible for any one person to attend more than a tiny fraction of the interesting papers, but luckily, ICASSP is one of the (many) conferences that now require would-be presenters to submit a paper (in this case, 4 pages), not just a short abstract. All accepted papers are then published on a CD given to attendees.

This practice is an enormous step up, in all respects, from the old-fashioned idea of submitting an abstract of a couple of hundred words. It makes it possible for the program committee to referee submissions on a rational basis. What is even more important, it means that the conference proceedings become a crucial mode of technical communication. In many subfields, such conference papers (typically made available for free in reprint archives or on authors’ home pages) now play a more important role than journal publication does.

[I wish that the LSA would totter into the 1980s and join this trend…]

I’ll illustrate the value of such conference papers with one example that I stumbled across yesterday. If I have time, I’ll blog about some others later on.

The specific ICASSP paper that I’m talking about is Athanasios Tsanas et al., “Enhanced Classical Dysphonia Measures and Sparse Regression for Telemonitoring of Parkinson’s Disease Progression”. Their abstract:

Dysphonia measures are signal processing algorithms that offer an objective method for characterizing voice disorders from recorded speech signals. In this paper, we study disordered voices of people with Parkinson’s disease (PD). Here, we demonstrate that a simple logarithmic transformation of these dysphonia measures can significantly enhance their potential for identifying subtle changes in PD symptoms. The superiority of the log-transformed measures is reflected in feature selection results using Bayesian Least Absolute Shrinkage and Selection Operator (LASSO) linear regression. We demonstrate the effectiveness of this enhancement in the emerging application of automated characterization of PD symptom progression from voice signals, rated on the Unified Parkinson’s Disease Rating Scale (UPDRS), the gold standard clinical metric for PD. Using least squares regression, we show that UPDRS can be accurately predicted to within six points of the clinicians’ observations.

That “within six points” should be interpreted with respect to the overall range of the metric (which is 0-176 for the total UPDRS, and 0-108 for the motor portion of the scale), and also with respect to the distribution of values among the 42 subjects in this study. This last distribution is not clearly specified in the paper, but we do learn that

The 42 subjects (28 males) had an age range (mean ± std) 64.4 ± 9.24 years, average motor-UPDRS 20.84 ± 8.82 points and average total UPDRS 28.44 ± 11.52 points.
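To put “within six points” in proportion, here is a minimal sketch that expresses a six-point error as a fraction of each scale’s range and in units of the reported between-subject standard deviation. The numbers are the ones quoted above; the abstract does not say whether the six-point figure refers to the total or the motor score, so applying it to both is my assumption, and the names `ERROR_POINTS`, `SCALES` and `relative_error` are purely illustrative.

```python
# Figures quoted in the post and in the paper's subject description.
# Assumption: the same six-point error is applied to both scales.
ERROR_POINTS = 6.0
SCALES = {
    "total UPDRS": {"range": 176.0, "std": 11.52},
    "motor UPDRS": {"range": 108.0, "std": 8.82},
}


def relative_error(error, scale_range, scale_std):
    """Return the error as (fraction of scale range, multiple of the std)."""
    return error / scale_range, error / scale_std


results = {
    name: relative_error(ERROR_POINTS, s["range"], s["std"])
    for name, s in SCALES.items()
}

for name, (fraction, in_std_units) in results.items():
    print(f"{name}: {fraction:.1%} of the scale, "
          f"{in_std_units:.2f} standard deviations")
```

On these figures, six points is about 3.4% of the total scale and 5.6% of the motor scale, but roughly half to two thirds of a standard deviation in this subject pool, which is why the distribution of scores matters for judging the result.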

The dysphonia measures used were based on subjects’ attempts to produce extended steady-state vowel sounds, and included several variants of standard phonetic measurements of jitter (local perturbation in pitch), shimmer (local perturbation of amplitude), and harmonics-to-noise ratio (breathiness).
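For readers who haven’t met these measures, here is a rough sketch of the most common “local” variant of jitter and shimmer: the mean absolute difference between consecutive cycles, divided by the mean value. The period and amplitude sequences are invented for illustration, the function name `local_perturbation` is hypothetical, and the paper uses a whole family of variants rather than just this one. The last lines apply the kind of logarithmic transformation that the abstract describes.

```python
import math


def local_perturbation(values):
    """Mean absolute cycle-to-cycle difference, relative to the mean value."""
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    return (sum(diffs) / len(diffs)) / (sum(values) / len(values))


# Hypothetical measurements from a sustained vowel (not data from the paper):
# glottal cycle lengths in seconds and peak amplitudes in arbitrary units.
periods = [0.0100, 0.0102, 0.0099, 0.0101, 0.0100]
amplitudes = [0.80, 0.84, 0.79, 0.82, 0.81]

jitter = local_perturbation(periods)        # perturbation in pitch period
shimmer = local_perturbation(amplitudes)    # perturbation in amplitude

# Log-transformed versions of the two measures.
log_jitter = math.log(jitter)
log_shimmer = math.log(shimmer)

print(f"jitter  = {jitter:.4f}  (log: {log_jitter:.3f})")
print(f"shimmer = {shimmer:.4f}  (log: {log_shimmer:.3f})")
```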

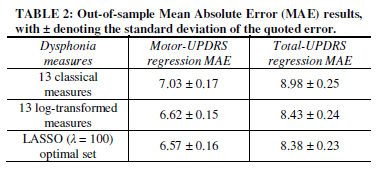

As this table suggests, the core result was simply that these dysphonia measures predicted the clinicians’ numerical judgments to within about seven to nine points.

The paper’s technical innovations (log transform of the jitter and shimmer measures, and subsequent feature selection) improved this baseline performance by about 7%. (Let me note in passing that the authors use ten-fold cross-validation and other appropriate techniques in making these estimates. This is worth noting, since researchers in disciplines like psychology and sociology sometimes report regression residuals or similar results without doing this, thus in effect testing on their training material and offering an inflated idea of the quality of their predictions.)
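To make the point about cross-validation concrete, here is a small sketch that fits an ordinary least-squares model to synthetic data and compares the mean absolute error measured on the training material with the error from ten-fold cross-validation. Everything here is an assumption made for illustration: the data are random numbers rather than dysphonia measures, the model is plain least squares rather than the authors’ pipeline, and the helper names (`solve`, `fit`, `cross_validated_mae`) are my own.

```python
import random


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit(X, y, ridge=1e-8):
    """Least squares via the normal equations (tiny ridge for stability)."""
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) + (ridge if i == j else 0.0)
            for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * target for row, target in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


def predict(weights, X):
    return [sum(w * v for w, v in zip(weights, row)) for row in X]


def mean_absolute_error(y, predictions):
    return sum(abs(a - b) for a, b in zip(y, predictions)) / len(y)


def cross_validated_mae(X, y, k=10, seed=0):
    """Average absolute error on held-out folds, never on training rows."""
    indices = list(range(len(y)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    errors = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in indices if i not in held]
        weights = fit([X[i] for i in train], [y[i] for i in train])
        predictions = predict(weights, [X[i] for i in held_out])
        errors.extend(abs(y[i] - p) for i, p in zip(held_out, predictions))
    return sum(errors) / len(errors)


# Synthetic data: 60 "recordings", an intercept plus 20 features,
# of which only two actually influence the target.
rng = random.Random(1)
X = [[1.0] + [rng.gauss(0.0, 1.0) for _ in range(20)] for _ in range(60)]
y = [20.0 + 3.0 * row[1] + 2.0 * row[2] + rng.gauss(0.0, 5.0) for row in X]

in_sample_mae = mean_absolute_error(y, predict(fit(X, y), X))
cross_val_mae = cross_validated_mae(X, y, k=10)

print(f"MAE on training data:      {in_sample_mae:.2f}")
print(f"MAE, 10-fold cross-valid.: {cross_val_mae:.2f}")
```

With many features and few observations, the error measured on the training data comes out noticeably smaller than the cross-validated error, which is the inflation described above.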

I don’t know enough about the distribution of UPDRS values in the subject pool, or the typical repeatability of clinicians’ UPDRS assignments to individual patients, to evaluate this description of the residuals. And I don’t know enough about the clinical management of Parkinson’s patients to evaluate how useful this level of prediction would be as a monitoring tool, by itself or in combination with other inputs. But to my eye — that of someone who understands the speech science and the basic statistical issues reasonably well, and has a superficial understanding of the medical issues — this looks promising.

More generally, I’m convinced that it’s possible to quantify many speech- and language-based diagnostic indicators, with sensitivity, specificity, and repeatability that compare favorably with many of the physiological tests in common use today. And these linguistic tests are totally non-invasive, require no apparatus other than a microphone and a computer, and in many cases can be administered remotely.

Considering the possible benefits, there’s remarkably little research of this kind, overall, and so I was happy to find a specific example of such high quality.

(Note that I could get this far in learning about this conference presentation because it exists as a coherent document, published in the conference proceedings and also on the authors’ web site. If ICASSP were run like the LSA meeting, with a 200-word abstract being the only concrete record of a presentation, I wouldn’t be writing this. And even if the live presentation (in this case a poster) had caught my eye, I might never have remembered to follow up on it.)

[Update 3/21/2010: In reference to this post, Athanasios Tsanas writes:

Your concerns there are right: the distribution of the motor-UPDRS and total-UPDRS scores are not mentioned (roughly these are Gaussian shape like, with a peak almost in the middle of the range of values). Clinically, I got the impression that medics are satisfied with UPDRS estimates within about 5 points of their evaluations – which is the inter-rater variability, i.e. the UPDRS difference that might occur in the evaluation of patients between two trained clinicians. In fact, my latest results suggest we can do considerably better than that, further endorsing the argument of using speech signals to track average Parkinson’s disease symptom severity. These new results are derived by using some novel nonlinear speech signal processing algorithms which complement the already established measures, and we are submitting a paper describing all that soon.

]