As a Consumer Reports subscriber, I received an email asking for my input on vehicles. Here’s what Consumer Reports asked and how this particular survey might come up short.

Can You Say “Complicated”?

Surveying people with accuracy is difficult. First of all, many questions are open to interpretation. Second, the method for collecting answers is often imperfect. Finally, not every respondent brings the same amount of diligence and effort to a survey.

NOTE: If you need proof that surveys are problematic, think about all those political survey results we hear…more than half the time, these scientific surveys are patently incorrect.

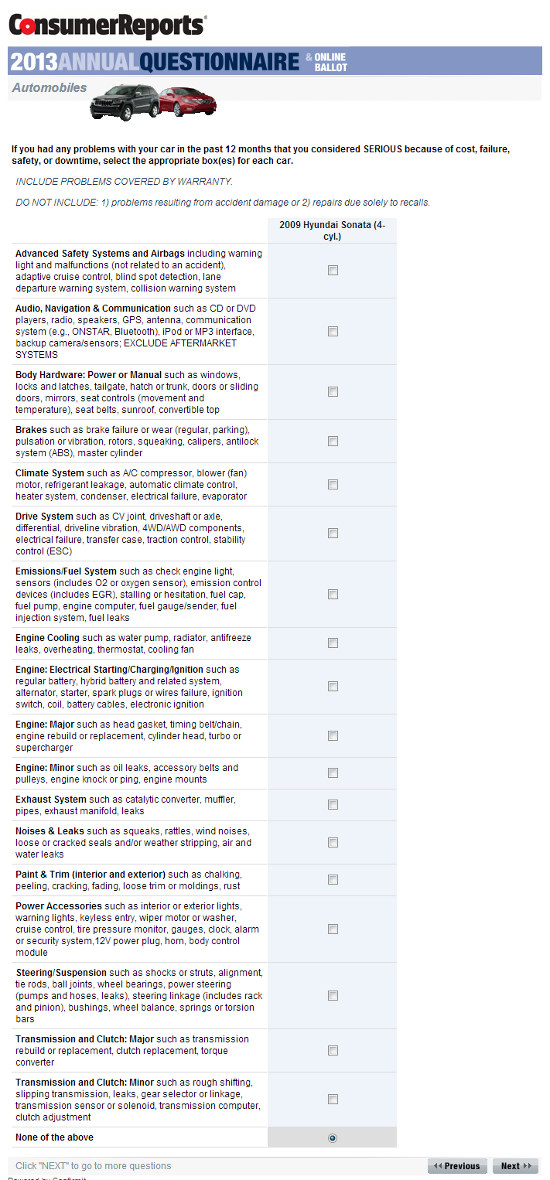

In my opinion, the biggest flaw in the survey I received from Consumer Reports is that it’s too damn complicated. Take a look at this survey page:

Here’s a nice long list of serious problems that Consumer Reports wants to know about. How many people read this list completely and carefully?

Did you read each and every line of that survey? Me neither. Quite frankly, I can’t imagine that most people read this list carefully. Instead, they probably skim the headings like “steering/suspension,” and then click if/when they think they’ve had an applicable issue. I have three problems with this:

1) Some of the headings – like “Drive System” – are incredibly ambiguous. If I asked a random man or woman on the street about problems with their vehicle’s “drive system,” who knows what kind of response I might get. Granted, the survey offers some examples, but there are a few sub-headings here that just aren’t descriptive enough.

2) This detailed list requires the respondent to make some fairly nuanced distinctions. For example: Let’s say your vehicle had a manual transmission with a grinding noise caused by worn synchros. Which category would you mark this down under?

- Transmission and Clutch: Major?

- Transmission and Clutch: Minor?

- Noises and Leaks?

- Drive System?

If you review things carefully, you’d probably pick the second item on my list. OR, you might get annoyed, close the survey, and move on…which brings me to my third problem.

3) This survey requires a fair amount of effort on the part of the respondent. Therefore, you’re more likely to get responses from people who are either really angry, really happy, or really excited about contributing to Consumer Reports.

For all these reasons, I’d argue the results from the Consumer Reports surveys are anything but definitive.

Is the Consumer Reports Survey Worthless?

Of course not. Imperfect survey data is better than no survey data, and while the length, detail, and ambiguity of the survey undoubtedly lead to some erroneous conclusions, the survey data is likely good enough to spot trends.

If (for example) very few Lexus owners report problems compared to BMW owners, it’s likely that Lexus is more reliable than BMW…especially if this trend holds for a period of years. Likewise, if a greater percentage of Chrysler owners report problems compared to the average, it’s likely that Chrysler products are below average in terms of quality.

Yet it would be foolhardy to trust Consumer Reports data exclusively. In fact, the best approach would be to consult data from Consumer Reports alongside data from JD Power, reviews from trusted entities like Edmunds.com, and of course market data (resale value), which is arguably the economic expression of a vehicle’s quality and reliability relative to competing models. Looking at historical trends would also be wise, at least in the case of JD Power and Consumer Reports.

The point? Consumer Reports survey data is imperfect. Don’t buy a car just because Consumer Reports says so.

The post Consumer Reports Annual Car Survey – What It Looks Like appeared first on Tundra Headquarters Blog.