Panic in Cupertino: Headless chickens run around smacking into one another, because they don’t know they’re dead.

That’s the fundamental problem with Apple, and this situation is largely independent of recent stock price declines that analysts, bloggers, reporters and other writers can’t opine enough about. Falling shares are part of a necessary correction, as reality displaces perception. To understand what’s happening now, you need to look into the past — three years, which by Internet counting is like a lifetime.

Three years. I want you to repeat “three years” like a mantra while reading this analysis. That’s all it took for Apple’s recent rise and about all it could take for the fall. The company isn’t going away and surely will remain successful for a long time, just more as a niche brand, as it was before. That is, unless CEO Tim Cook and company do something dramatic, like apply the “David Thinking” that spurred success in the past, while giving up the status quo approach for the future (that’s something I don’t expect).

History Lesson

In September 2009, the Financial Accounting Standards Board changed reporting rules that greatly benefitted Apple. Beforehand, the company deferred a portion of iPhone revenue over 24 months, rather than put it all on the books at once. Reasoning: iPhone buyers commit to two-year contracts for subsidized pricing (they pay less than devices cost) and Apple delivers iOS updates over time. The new rules let Apple realize revenue immediately, and the company adopted the change with fiscal 2010 first quarter results. Three years ago this month.

The change was dramatic. Apple beat analyst revenue consensus by more than $3.5 billion, reaching $15.6 billion. The company didn’t stop there, but revised earnings reports going back two years, essentially raising revenue after the fact. “Not since the Soviet Union, have I seen any entity so brazenly try to rewrite history”, I accused. The restatement was blatant (although not illegal) manipulation, striking in both its breadth and its suddenness.

The past revision was huge. For example, for fiscal fourth quarter 2008, Apple reported $7.9 billion revenue and net profits of $1.14 billion. The company shipped 6.9 million iPhones, but only reported revenue of $806 million. The revised figures raised revenue to $11.52 billion and net profit to $2.25 billion. The difference: $3.62 billion revenue and $1.11 billion net income. Apple didn’t report the revised results in those past quarters. You can change the historical record, but not the past or decisions Apple investors made three months or eight quarters earlier.
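To make the size of that restatement concrete, here is a minimal sketch of the arithmetic, using only the fiscal fourth quarter 2008 figures quoted above. The variable and function names are illustrative, not anything from Apple's filings.

```python
from decimal import Decimal

# Fiscal Q4 2008 figures quoted in the text, in billions of US dollars.
reported = {"revenue": Decimal("7.90"), "net_profit": Decimal("1.14")}
revised = {"revenue": Decimal("11.52"), "net_profit": Decimal("2.25")}

def restatement_delta(before, after):
    """Return the amount each line item grew after the restatement."""
    return {item: after[item] - before[item] for item in before}

delta = restatement_delta(reported, revised)
for item, amount in delta.items():
    print(f"{item}: +${amount} billion")
```

Decimal arithmetic is used here only so the subtraction matches the two-decimal figures in the text exactly; plain floats would show the same differences after rounding.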

The accounting change, along with iPad and iPhone 4 sales success, hugely lifted Apple revenue and profit in 2010. So much that in April 2011, I posted a chart showing the gains. During calendar 2010, Apple revenue rose from $13.5 billion in first quarter to $26.7 billion in the last and profit from $3.1 billion to $6 billion. So, both nearly doubled. iOS revenue more than doubled — and then some — to $15.9 billion from $6.4 billion.

Margin Call

Since then, Apple has been nothing short of an amazing money-making machine, and that’s about more than accounting changes. For calendar 2012, revenue reached $164.7 billion. The difference ($88.46 billion) is more than Apple’s total revenue for calendar 2010 ($76.24 billion). Profit more than doubled, from $16.7 billion to $39.58 billion. If things are so good now, why is the share price so bad?
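The comparison can be checked with a short sketch. It assumes the $16.7 billion profit figure is for calendar 2010 and the $39.58 billion figure for calendar 2012, which the text implies but does not state outright. All names are illustrative.

```python
from decimal import Decimal

# Figures quoted in the text, in billions of US dollars.
revenue_2010 = Decimal("76.24")
revenue_2012 = Decimal("164.7")
profit_2010 = Decimal("16.7")    # assumed to be calendar 2010
profit_2012 = Decimal("39.58")   # assumed to be calendar 2012

# Revenue added over two years, and how it compares with all of 2010.
revenue_gain = revenue_2012 - revenue_2010
gain_exceeds_2010 = revenue_gain > revenue_2010

# Profit expressed as a multiple of the earlier figure.
profit_multiple = profit_2012 / profit_2010

print(f"Revenue gain: ${revenue_gain} billion")
print(f"Gain exceeds 2010 revenue: {gain_exceeds_2010}")
print(f"Profit multiple: {profit_multiple:.2f}x")
```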

Apple’s problem isn’t past or present, but the future and whether its decline will be as fast as the meteoric rise. Three years. The stock chart above shows steady overall climb, beginning in early 2009 through November 2011, when a steep ascent started. Apple shares soared 94 percent, before reaching a record high in September 2012. As Apple shares rose, analysts, bloggers, reporters and other writers chattered about the stock reaching $1,000 (all-time high was $705.07) and market cap reaching $1 trillion. I looked askance as usual, but that’s just me. So given the hype, Apple’s decline since is quite shocking. At close of market today, shares are down 36 percent.

While shares fall, revenues rise. Except Apple missed Wall Street consensus two quarters in a row, which causes some people to be nutty about the stock. Meanwhile, during calendar fourth quarter, gross margin plummeted to 38.6 percent from 44.7 percent a year earlier and from 40 percent in Q3. The company forecasts gross margin between 37.5 percent and 38.5 percent for calendar first quarter.

iPad already tugs margins downward, as iPad mini cannibalizes larger slate sales. By my math, iPad category average selling prices fell 12.3 percent quarter on quarter — from $535 to $467. Year over year, iPad ASPs are down $101, Apple’s CFO admits. Only the company’s inability to manufacture enough minis to meet demand kept matters from being worse. “Our iPad units grew faster than our iPad revenue in the December quarter”, he says. “We would expect iPad ASPs to be down quite a bit in the March quarter on a year-over-year basis for the same reasons”.
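As a rough check on the ASP decline, here is a minimal sketch using the rounded dollar figures quoted above. With those rounded inputs the quarter-on-quarter drop comes out closer to 12.7 percent; the 12.3 percent figure presumably reflects unrounded inputs, though that is an assumption the numbers here cannot confirm. The year-ago ASP is derived by adding the $101 decline to the latest figure and is likewise an assumption.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100

# Rounded iPad average selling prices quoted in the text, in US dollars.
asp_prior_quarter = 535
asp_latest_quarter = 467

# Year-ago ASP is not quoted directly; derived from the $101 decline (assumption).
asp_year_ago = asp_latest_quarter + 101

qoq = pct_change(asp_prior_quarter, asp_latest_quarter)
yoy = pct_change(asp_year_ago, asp_latest_quarter)

print(f"Quarter-on-quarter ASP change: {qoq:.1f}%")
print(f"Year-over-year ASP change: {yoy:.1f}%")
```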

Feel the Pinch

Something else risks margins. In the November 2011 analysis “Apple is the new Dell”, I recounted how real-time manufacturing gave the Windows PC maker a supply chain advantage over competitors. Apple has accomplished a similar feat by using economies of scale and sheer influence to lock in lucrative component price deals that lock out competitor access.

As I explained then about these competitors: “They’ll adapt and improve Apple’s supply-chain recipe, just like Dell’s PC competitors did a decade ago. As significant, as more manufacturing capacity comes online, Apple won’t as easily get the best prices or, by monopolizing supply, shut out competitors producing smartphones, tablets and other connected mobile devices. As components become more readily available and for lower costs, competitors can improve margins and still lower selling prices against products like iPhone and iPad”. That situation absolutely is underway right now.

But there’s something more: All the bad buzz about Apple fosters perceived weakness that suppliers and other partners will exploit. You must understand market retribution dynamics. The powerful are often punished when weakened. Microsoft and Nokia are examples. As Apple’s reputation and share price fall, so will its muscle. Apple’s success makes it unpopular in many quarters, and nowhere more than among partners that feel they gave away more than they wanted or received less of the gravy than they deserved. The situation makes way for competitors like Samsung, which already is a major component supplier, to lock in some good deals of its own.

All this happens as Apple’s most important product, iPhone, faces increased risk. During calendar fourth quarter, iOS smartphone share fell to 22 percent from 23.6 percent a year earlier, according to Strategy Analytics. Meanwhile, Android rose to 70.1 percent from 51.3 percent. For all 2012, iOS nudged up to 19.4 percent from 19 percent share, while Android reached 68.4 percent, up from 48.7 percent. The differences between the quarter and the year strongly suggest a late-year sales surge for Android, which bodes poorly for Apple, given that iOS got a big lift from iPhone 5’s recent launch.

During calendar Q4, iPhone revenue rose to $30.67 billion from $16.25 billion three months earlier. The device accounted for 56.3 percent of all Apple revenue, up from 52.7 percent a year earlier. Any risk to iPhone hurts the whole company.

What’s a Year?

All this leads me back to the three years theme: how quickly a company’s fortunes can change and how quick the collective human brain is to forget. Hell, not even three years but one. Or less. As previously mentioned, in 10 months Apple shares rose 94 percent (leading to predictions about how high they could go) only to fall nearly 40 percent in another five (leading to speculation about how low the stock will fall).

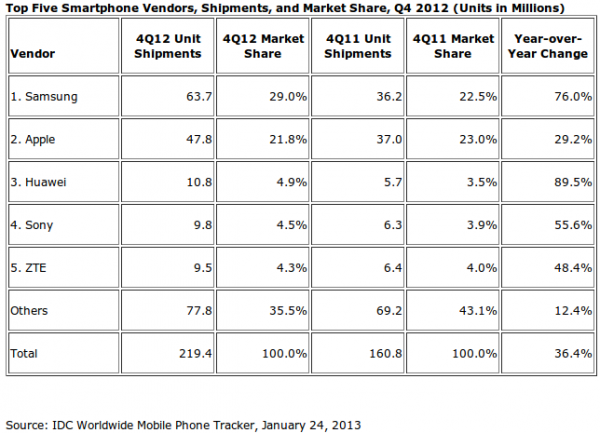

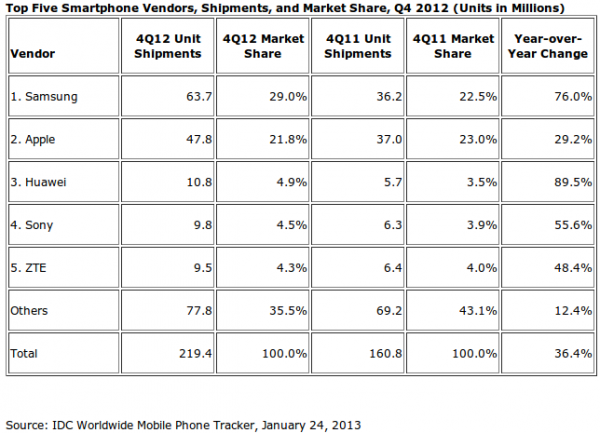

Apple rival Samsung is a startling example, too. In fourth quarter 2011, Apple actually shipped more smartphones than Samsung: 23 million and 22.5 million, respectively, according to IDC. A year later, Samsung shipments rose 76 percent, with 63.7 million smartphones to Apple’s 47.8 million.

But Samsung claimed glory sooner, in Q1 2012, beating out Nokia in global handset shipments and Apple in smartphones, according to Strategy Analytics. The South Korean manufacturer’s rise was fast, ahem, like Apple’s. In just 10 quarters, Samsung went from a bottom-feeding 5 percent smartphone share to top-dog 29 percent.

Nokia invented the smartphone in the mid-1990s and was the undisputed global handset leader for years, even after Apple released iPhone. But in just three years, the Finnish phone maker’s fortunes collapsed. Smartphone share in Q4, according to Strategy Analytics: 3 percent. Three years earlier: 39.2 percent. Many of the markets Nokia dominated three years ago belong mostly to Samsung and somewhat to Apple.

Research in Motion is another example, with smartphone share ahead of Apple in Q4 2009 — 20.2 percent to 16.4 percent, respectively. Three years later, Strategy Analytics lumps RIM with Other.

We forget how fast fortunes are made or lost. Apple’s rise is a three-year story, and its fall can be just as fast, as impossible as such decline might seem to many. Cloud-connected devices form a highly complex and dynamic market. Anything can happen. Apple won’t go down easy, but I promise that there will be a pileup of competitors, and even partners, seeking to take it down.

Photo Credit: Joe Wilcox