Late this afternoon, Microsoft answered a question oft-asked by investors this month: What’s up with Windows 8? The new operating system, which launched October 26, was supposed to lift sagging PC sales and show that Microsoft can compete with so-called post-PC platforms like Android and iOS. Now we know more. Windows & Windows Live revenue passed Business, making the OS division the company’s biggest revenue generator again.

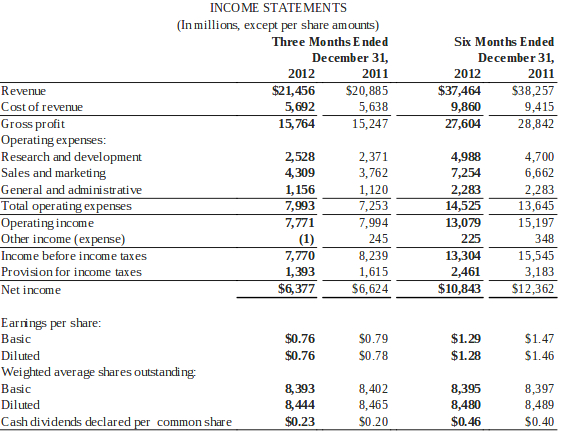

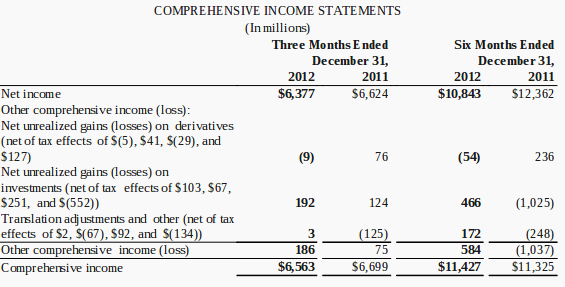

For fiscal second quarter, ended December 31, Microsoft revenue was $21.46 billion, up 3 percent year over year. Operating income: $7.77 billion, a 3 percent decrease. Net income was $6.38 billion, or 76 cents a share.

Analyst consensus for the quarter was $21.53 billion in revenue and earnings of 74 cents per share. Revenue estimates ranged from $19.94 billion to $23.32 billion, with estimated year-over-year growth of 3.1 percent, which is mighty modest for a holiday quarter when new PC and phone operating systems launched and Microsoft released its first tablet, Surface RT.

Shares dipped by 2 percent in early after-market trading, falling to $27.06 from the $27.63 close. Like Apple yesterday, Microsoft beat earnings consensus but missed on sales.
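For readers who want to check the math, here is a minimal Python sketch of the beat-and-miss comparison and the after-hours share move, using only the figures quoted above. The function and variable names are my own, chosen for illustration.

```python
# Figures quoted in the article; names are illustrative only.
def pct_change(new, old):
    """Percentage change from old to new."""
    return (new - old) / old * 100

# Reported results versus analyst consensus
revenue_gap = 21.46 - 21.53   # billions of dollars; negative means a miss
eps_gap = 76 - 74             # cents per share; positive means a beat

# After-market share move from the $27.63 close to $27.06
share_move = pct_change(27.06, 27.63)

print(f"Revenue vs. consensus: {revenue_gap:+.2f} billion")
print(f"EPS vs. consensus: {eps_gap:+d} cents")
print(f"After-hours share move: {share_move:.1f} percent")
```

The share move works out to roughly a 2 percent decline, which matches the dip described above.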

Adjusted for the impact of Office and Windows upgrade offers (the non-GAAP view), revenue grew by 5 percent to $22 billion, operating income by 4 percent to $8.3 billion, and EPS by 4 percent to 81 cents.

“Our big, bold ambition to reimagine Windows as well as launch Surface and Windows Phone 8 has sparked growing enthusiasm with our customers and unprecedented opportunity and creativity with our partners and developers”, CEO Steve Ballmer boasts. “With new Windows devices, including Surface Pro, and the new Office on the horizon, we’ll continue to drive excitement for the Windows ecosystem and deliver our software through devices and services people love and businesses need”.

Windows & Windows Live revenue rose 24 percent year over year to $5.88 billion, buoyed by a deferral from the previous quarter. Without the extra lift, revenue still increased by 11 percent.

Microsoft’s Perception Problem

As I’ve oft said, in business, perception is everything. To many people, Windows is Microsoft and the fate of one influences the other. Perception is a devil. Take Apple, for example, which reported $54.5 billion revenue and $13.06 billion net income yesterday. Today, shares closed down 12.35 percent, in part on perception that growth days are over, despite simply huge quarterly numbers. Microsoft’s problem is by no means comparable, but Apple’s situation makes a point. If investors so punish the company for such a great quarter, what can negative or positive perceptions about Windows’ future do?

Microsoft is no longer bound to Windows, despite marketing hype about “reimagining”. In October, CEO Steve Ballmer described the company’s new direction as “devices and services”. The Business division, with flagship Office, generally generates more revenue than Windows & Windows Live, and last quarter Server & Tools did, too. The company is in the process of reducing its dependence on Windows as the top of its hugely successful vertical applications stack, which is built around Office and server software and now extends through cloud services, such as Office 365, Azure, Skype and SkyDrive among many others. Windows is still hugely valuable, and anchors a huge ecosystem, but Microsoft can transcend the OS.

The problem: Public sentiment says something else, that Windows can’t compete in the post-PC era, which I call the connected-devices era. If Windows can’t, neither can Microsoft. I don’t agree. Microsoft’s apps, datacenter and server software already are primed to serve multiple devices, not just the PC, and that’s a longstanding development strategy now far advanced. Microsoft is ready to move beyond Windows, and the holiday quarter PC shipments show such a future is inevitable. Windows won’t go away but will stand alongside other platforms rather than being the overwhelmingly dominant one.

Business and Server & Tools succeed for many reasons, and they will continue to do so as long as enterprises stay the course buying annuity contracts. Combined, more than half the two groups’ revenues come from volume-licensing contracts with Software Assurance.

Companies get the license and annually pay 25 percent or 29 percent of the full price to get upgrades (or even to exercise downgrade rights) over two- or three-year periods. Software Assurance insulates Microsoft from the ups and downs of economies and of PC purchases. If ever businesses back away en masse from annuity or subscription contracts, that’s the day to seriously worry about Microsoft’s future.

Whither Windows 8

Where the flagship operating system matters most is where Microsoft tries to take it: touchscreen devices, such as hybrids and tablets, with Surface RT and Pro serving as reference designs for OEM partners to emulate. The Redmond, Wash.-based company announced plans to port Windows to ARM processors in January 2011, then followed up with the tile-based Modern UI that unifies ARM and x86 operating systems, including Windows Phone. Pundits pooh-pooh PC shipments, which stank in Q4, as evidence Surface and Windows 8 are failures. I ask: By what measure? Seems to me, Microsoft already changed Windows’ course to embrace a broader range of devices, with a unifying UI. Transitions like this take time to succeed, or fail.

There is need. Had Microsoft not made over Windows, the problem wouldn’t be perception but a very real crisis. Three legs support the profit center, and Windows bound to traditional PCs would be one cut off. Instead, Windows 8 holds future device promise. Much depends on the devices the company and its partners produce and the apps and services supporting them. Honestly, looking at the holiday PC lineup, Surface RT is about the only thing looking good. OEMs failed to deliver compelling products that get people buying.

PCs continued their more-than-year-long collapse during the fourth quarter. Windows 8 gave no meaningful lift. Shipments fell 4.9 percent year over year, according to Gartner. For all 2012: down 3.5 percent. Manufacturers shipped 90.3 million and 352.7 million units for the respective time periods. IDC offers a grimmer perspective: PC shipments fell 6.4 percent for Q4 (two points more than forecast) and 3.2 percent for the year.

Consumers aren’t buying Windows PCs like they used to, and their infatuation with iPad, some other tablets and smartphones is spreading. “Tablets have dramatically changed the device landscape for PCs, not so much by ‘cannibalizing’ PC sales, but by causing PC users to shift consumption to tablets rather than replacing older PCs”, Mikako Kitagawa, Gartner principal analyst, says, speaking about Q4 PC shipments.

She no longer believes that PCs and tablets will coexist for a meaningful time. “There will be some individuals who retain both, but we believe they will be exception and not the norm. Therefore, we hypothesize that buyers will not replace secondary PCs in the household, instead allowing them to age out and shifting consumption to a tablet”.

Combine that with the bring-your-own-device (BYOD) to work movement, and the future looks grim. Or does it? Microsoft already has its apps, cloud and server businesses primed for BYOD, as I’ve written here before. Then there is broader context, for those calling Windows 8 a flop because PC shipments fell in Q4. Apple got hit, too. Analyst consensus was for 5.2 million Macs shipped, but only 4.1 million did. Yesterday, Apple CEO Tim Cook laid blame on late delivery of new iMacs. He said that Mac shipments would have been otherwise higher.

I don’t find that credible. But let’s assume for a moment he’s right. iMac is an expensive beast, starting at $1,299 and selling for as much as $1,999 in standard config. If Apple can command such selling strength, why can’t Microsoft OEMs? They should, by releasing innovative hybrid desktop and portable designs that capitalize on Windows 8’s best features. I contend they did not in the fourth quarter.

Something else to consider when looking at the PC market and future of Windows. In a compelling rebuttal to claims the PC is dying, Derrick Wlodarz, who owns a computer repair business, makes an observation often ignored: “Whereas customers of mine were getting 3-4 years out of machines back in the early 2000s, they now push their PCs to 4-6 year replacement cycles without much sweat”. Major reason: Older hardware has more than enough processor and graphics power to meet modern needs.

“Now, a Windows Vista or newer PC could likely run on for five, six, seven or possibly more years without much issue. And this is the untold trend that I see in my customer base”, he asserts. “Solid, secure operating systems installed onto well-engineered computer hardware equals a darn long system life”.

So if the PC in the den or office is good enough and not in need of replacement, why not buy a new smartphone or tablet? Remember: Microsoft pushes ahead with solutions for both these categories. Then there is the fourth quarter to consider, where Windows delivered solid growth. Now if only the broader ecosystem could capitalize upon it.

Division Highlights

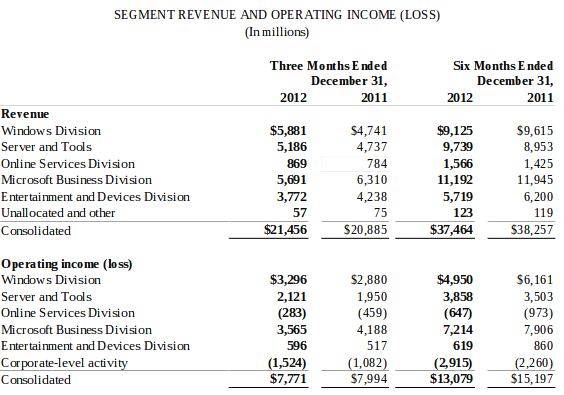

Microsoft reports revenue and earnings results for five divisions: Windows & Windows Live, Server & Tools, Business, Online Services and Entertainment & Devices.

Windows & Windows Live. Revenue soared 24 percent year over year despite weak PC sales, surely buoyed by the low-cost Windows Pro upgrade price that ends January 31. A $622 million deferral helped lift revenue to $5.88 billion. Without it, revenue would have grown by 11 percent.
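The two growth rates above reconcile if you back out the deferral, as this rough Python sketch shows. It assumes the year-ago revenue can be implied from the rounded 24 percent figure, which Microsoft's actual filing may not match exactly, and the names are mine, for illustration.

```python
# Figures quoted in the article, in billions of dollars.
reported_revenue = 5.88
deferral = 0.622
reported_growth_pct = 24

# Assumption: year-ago revenue is implied from the rounded growth rate,
# so this is an approximation, not the figure from Microsoft's filing.
implied_prior_year = reported_revenue / (1 + reported_growth_pct / 100)

# Strip out the deferral and recompute growth against the same base.
adjusted_revenue = reported_revenue - deferral
adjusted_growth_pct = (adjusted_revenue - implied_prior_year) / implied_prior_year * 100

print(f"Implied year-ago revenue: ${implied_prior_year:.2f} billion")
print(f"Revenue without deferral: ${adjusted_revenue:.2f} billion")
print(f"Growth without deferral: {adjusted_growth_pct:.0f} percent")
```

Under that assumption, growth without the deferral comes out to about 11 percent, in line with the adjusted figure Microsoft gave.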

To date, Microsoft has sold 60 million Windows 8 licenses.

OEM revenue grew by 17 percent, outpacing the broader PC market.

Server & Tools. “We see strong momentum in our enterprise business”, Microsoft COO Kevin Turner says. “With the launch of SQL Server 2012 and Windows Server 2012, we continue to see healthy growth in our data platform and infrastructure businesses and win share from our competitors. With the coming launch of the new Office, we will provide a cloud-enabled suite of products that will deliver unparalleled productivity and flexibility”.

Revenue rose 9 percent, or $347 million, to $5.19 billion. As previously mentioned, the division is insulated against economic maladies, because about 50 percent of revenues come from contractual volume-licensing agreements.

New bookings increased by 15 percent. Meanwhile System Center revenue grew by 18 percent and SQL Server by 16 percent.

Business. “We saw strong growth in our enterprise business driven by multi-year commitments to the Microsoft platform, which positions us well for long-term growth”, Microsoft CFO Peter Klein says. “Multi-year licensing revenue grew double-digits across Windows, Server & Tools, and the Microsoft Business Division”.

Despite touted growth, revenue fell by 10 percent year over year to $5.69 billion. However, when removing adjustments for Office upgrade offer and pre-sales, revenue grew by 3 percent.

Bookings increased by 18 percent and multi-year licensing by 10 percent. However, consumer revenue fell by 2 percent.

Like Server & Tools, the Business division is largely insulated against sluggish PC sales. Sixty percent of revenue comes from annuity licensing to businesses.

Online Services Business. Online services revenue rose by 10 percent, or $109 million, to $823 million. However, the division remains unprofitable. Search and display ads drove up online advertising revenue by 15 percent.

Entertainment & Devices. Microsoft shipped 5.2 million Xbox consoles, down from 8.2 million in the holiday quarter a year earlier. As a platform, Xbox 360 revenue fell 29 percent, or $1.1 billion.

Xbox Live subscriptions now exceed 40 million.

Windows Phone sales are up 4 times year over year, which is a polite way of saying they’re not good enough. If they were, Microsoft would say how many.

Users made 138 billion Skype calls, up 59 percent year over year.