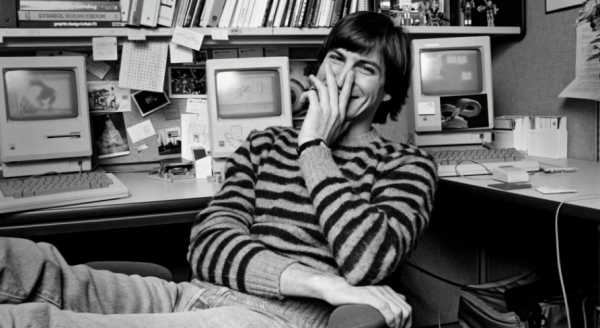

Sixteenth in a series. Robert X. Cringely’s tome Accidental Empires takes on a startlingly prescient tone in this next installment. Remember as you read that the book was published in 1991. Much of what he writes here about Apple cofounder Steve Jobs is remarkably insightful in hindsight. Some portions foreshadow the future (or one possible outcome) when looking at Apple following Jobs’ ouster in 1985 and the company now following his death.

The most dangerous man in Silicon Valley sits alone on many weekday mornings, drinking coffee at Il Fornaio, an Italian restaurant on Cowper Street in Palo Alto. He’s not the richest guy around or the smartest, but under a haircut that looks as if someone put a bowl on his head and trimmed around the edges, Steve Jobs holds an idea that keeps some grown men and women of the Valley awake at night. Unlike these insomniacs, Jobs isn’t in this business for the money, and that’s what makes him dangerous.

I wish, sometimes, that I could say this personal computer stuff is just a matter of hard-headed business, but that would in no way account for the phenomenon of Steve Jobs. Co-founder of Apple Computer and founder of NeXT Inc., Jobs has literally forced the personal computer industry to follow his direction for fifteen years, a direction based not on business or intellectual principles but on a combination of technical vision and ego gratification in which both business and technical acumen played only small parts.

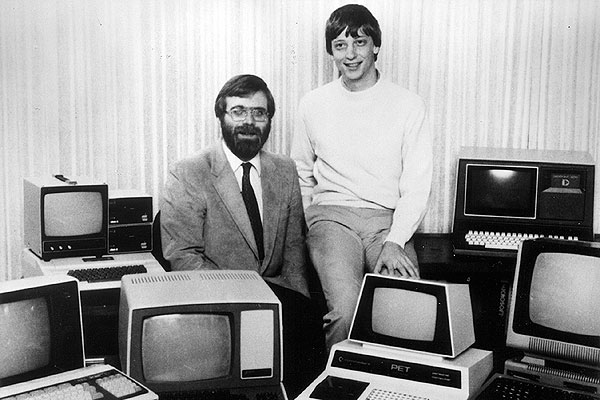

Steve Jobs sees the personal computer as his tool for changing the world. I know that sounds a lot like Bill Gates, but it’s really very different. Gates sees the personal computer as a tool for transferring every stray dollar, deutsche mark, and kopeck in the world into his pocket. Gates doesn’t really give a damn how people interact with their computers as long as they pay up. Jobs gives a damn. He wants to tell the world how to compute, to set the style for computing.

Bill Gates has no style; Steve Jobs has nothing but style.

A friend once suggested that Gates switch to Armani suits from his regular plaid shirt and Levi’s Dockers look. “I can’t do that,” Bill replied. “Steve Jobs wears Armani suits.”

Think of Bill Gates as the emir of Kuwait and Steve Jobs as Saddam Hussein.

Like the emir, Gates wants to run his particular subculture with an iron hand, dispensing flawed justice as he sees fit and generally keeping the bucks flowing in, not out. Jobs wants to control the world. He doesn’t care about maintaining a strategic advantage; he wants to attack, to bring death to the infidels. We’re talking rivers of blood here. We’re talking martyrs. Jobs doesn’t care if there are a dozen companies or a hundred companies opposing him. He doesn’t care what the odds are against success. Like Saddam, he doesn’t even care how much his losses are. Nor does he even have to win if, by losing the mother of all battles, he can maintain his peculiar form of conviction, still stand before an adoring crowd of nerds, symbolically firing his 9 mm automatic into the air, telling the victors that they are still full of shit.

You guessed it. By the usual standards of Silicon Valley CEOs, where job satisfaction is measured in dollars, and an opulent retirement by age 40 is the goal, Steve Jobs is crazy.

Apple Computer was always different. The company tried hard from the beginning to shake the hobbyist image, replacing it with the idea that the Apple II was an appliance but not just any appliance; it was the next great appliance, a Cuisinart for the mind. Apple had the five-color logo and the first celebrity spokesperson: Dick Cavett, the thinking person’s talk show host.

Alone among the microcomputer makers of the 1970s, the people of Apple saw themselves as not just making boxes or making money; they thought of themselves as changing the world.

Atari wasn’t changing the world; it was in the entertainment business. Commodore wasn’t changing the world; it was just trying to escape from the falling profit margins of the calculator market while running a stock scam along the way. Radio Shack wasn’t changing the world; it was just trying to find a new consumer wave to ride, following the end of the CB radio boom. Even IBM, which already controlled the world, had no aspirations to change it, just to wrest some extra money from a small part of the world that it had previously ignored.

In contrast to the hardscrabble start-ups that were trying to eke out a living selling to hobbyists and experimenters, Apple was appealing to doctors, lawyers, and middle managers in large corporations by advertising on radio and in full pages of Scientific American. Apple took a heroic approach to selling the personal computer and, by doing so, taught all the others how it should be done.

They were heroes, those Apple folk, and saw themselves that way. They were more than a computer company. In fact, to figure out what was going on in the upper echelons in those Apple II days, think of it not as a computer company at all but as an episode of “Bonanza.”

(Theme music, please.)

Riding straight off the Ponderosa’s high country range every Sunday night at nine was Ben Cartwright, the wise and supportive father, who was willing to wield his immense power if needed. At Apple, the part of Ben was played by Mike Markkula.

Adam Cartwright, the eldest and best-educated son, who was sophisticated, cynical, and bossy, was played by Mike Scott. Hoss Cartwright, a good-natured guy who was capable of amazing feats of strength but only when pushed along by the others, was played by Steve Wozniak. Finally, Little Joe Cartwright, the baby of the family who was quick with his mouth, quick with his gun, but was never taken as seriously as he wanted to be by the rest of the family, was played by young Steve Jobs.

The series was stacked against Little Joe. Adam would always be older and more experienced. Hoss would always be stronger. Ben would always have the final word. Coming from this environment, it was hard for a Little Joe character to grow in his own right, short of waiting for the others to die. Steve Jobs didn’t like to wait.

By the late 1970s, Apple was scattered across a dozen one- and two-story buildings just off the freeway in Cupertino, California. The company had grown to the point where, for the first time, employees didn’t all know each other on sight. Maybe that kid in the KOME T-shirt who was poring over the main circuit board of Apple’s next computer was a new engineer, a manufacturing guy, a marketer, or maybe he wasn’t any of those things and had just wandered in for a look around. It had happened before. Worse, maybe he was a spy for the other guys, which at that time didn’t mean IBM or Compaq but more likely meant the start-up down the street that was furiously working on its own microcomputer, which its designers were sure would soon make the world forget that there ever was a company called Apple.

Facing these realities of growth and competition, the grownups at Apple (Mike Markkula, chairman, and Mike Scott, president) decided that ID badges were in order. The badges included a name and an individual employee number, the latter based on the order in which workers joined the company. Steve Wozniak was declared employee number 1, Steve Jobs was number 2, and so on.

Jobs didn’t want to be employee number 2. He didn’t want to be second in anything. Jobs argued that he, rather than Woz, should have the sacred number 1 since they were co-founders of the company and J came before W in the alphabet. It was a kid’s argument, but then Jobs, who was still in his early twenties, was a kid. When that plan was rejected, he argued that the number 0 was still unassigned, and since 0 came before 1, Jobs would be happy to take that number. He got it.

Steve Wozniak deserved to be considered Apple’s number 1 employee. From a technical standpoint, Woz literally was Apple Computer. He designed the Apple II and wrote most of its system software and its first BASIC interpreter. With the exception of the computer’s switching power supply and molded plastic case, literally every other major component in the Apple II was a product of Wozniak’s mind and hand.

And in many ways, Woz was even Apple’s conscience. When the company was up and running and it became evident that some early employees had been treated more fairly than others in the distribution of stock, it was Wozniak who played the peacemaker, selling cheaply 80,000 of his own Apple shares to employees who felt cheated and even to those who just wanted to make money at Woz’s expense.

Steve Jobs’s roles in the development of the Apple II were those of purchasing agent, technical gadfly, and supersalesman. He nagged Woz into a brilliant design performance and then took Woz’s box to the world, where through sheer force of will, this kid with long hair and a scraggly beard imprinted his enthusiasm for the Apple II on thousands of would-be users met at computer shows. But for all Jobs did to sell the world on the idea of buying a microcomputer, the Apple II would always be Wozniak’s machine, a fact that might have galled employee number 0, had he allowed it to. But with the huckster’s eternal optimism, Jobs was always looking ahead to the next technical advance, the next computer, determined that that machine would be all his.

Jobs finally got the chance to overtake his friend when Woz was hurt in the February 1981 crash of his Beechcraft Bonanza after an engine failure taking off from the Scotts Valley airport. With facial injuries and a case of temporary amnesia, Woz was away from Apple for more than two years, during which he returned to Berkeley to finish his undergraduate degree and produced two rock festivals that lost a combined total of nearly $25 million, proving that not everything Steve Wozniak touched turned to gold.

Another break for Jobs came two months after Woz’s airplane crash, when Mike Scott was forced out as Apple president, a victim of his own ruthless drive that had built Apple into a $300 million company. Scott was dogmatic. He did stupid things like issuing edicts against holding conversations in aisles or while standing. Scott was brusque and demanding with employees (“Are you working your ass off?” he’d ask, glaring over an office cubicle partition). And when Apple had its first-ever round of layoffs, Scott handled them brutally, pushing so hard to keep momentum going that he denied the company a chance to mourn its loss of innocence.

Scott was a kind of clumsy parent who tried hard, sometimes too hard, and often did the wrong things for the right reasons. He was not well suited to lead the $1 billion company that Apple would soon be.

Scott had carefully thwarted the ambitions of Steve Jobs. Although Jobs owned 10 percent of Apple, outside of purchasing (where Scott still insisted on signing the purchase orders, even if Jobs negotiated the terms), he had little authority.

Mike Markkula fired Scott, sending the ex-president into a months-long depression. And it was Markkula who took over as president when Scott left, while Jobs slid into Markkula’s old job as chairman. Markkula, who’d already retired once before, from Intel, didn’t really want the president’s job and in fact had been trying to remove himself from day-to-day management responsibility at Apple. As a president with retirement plans, Markkula was easier-going than Scott had been and looked much more kindly on Jobs, whom he viewed as a son.

Every high-tech company needs a technical visionary, someone who has a clear idea about the future and is willing to do whatever it takes to push the rest of the operation in that direction. In the earliest days of Apple, Woz was the technical visionary along with doing nearly everything else. His job was to see the potential product that could be built from a pile of computer chips. But that was back when the world was simpler and the paradigm was to bring to the desktop something that emulated a mainframe computer terminal. After 1981, Woz was gone, and it was time for someone else to take the visionary role. The only people inside Apple who really wanted that role were Jef Raskin and Steve Jobs.

Raskin was an iconoclastic engineer who first came to Apple to produce user manuals for the Apple II. His vision of the future was a very basic computer that would sell for around $600 — a computer so easy to use that it would require no written instructions, no user training, and no product support from Apple. The new machine would be as easy and intuitive to use as a toaster and would be sold at places like Sears and K-Mart. Raskin called his computer Macintosh.

Jobs’s ambition was much grander. He wanted to lead the development of a radical and complex new computer system that featured a graphical user interface and mouse (Raskin preferred keyboards). Jobs’s vision was code-named Lisa.

Depending on who was talking and who was listening, Lisa was either an acronym for “large integrated software architecture,” or for “local integrated software architecture” or the name of a daughter born to Steve Jobs and Nancy Rogers in May 1978. Jobs, the self-centered adoptee who couldn’t stand competition from a baby, at first denied that he was Lisa’s father, sending mother and baby for a time onto the Santa Clara County welfare rolls. But blood tests and years later, Jobs and Lisa, now a teenager, are often seen rollerblading on the streets of Palo Alto. Jobs and Rogers never married.

Lisa, the computer, was born after Jobs toured Xerox PARC in December 1979, seeing for the first time what Bob Taylor’s crew at the Computer Science Lab had been able to do with bitmapped video displays, graphical user interfaces, and mice. “Why aren’t you marketing this stuff?” Jobs asked in wonderment as the Alto and other systems were put through their paces for him by a PARC scientist named Larry Tesler. Good question.

Steve Jobs saw the future that day at PARC and decided that if Xerox wouldn’t make that future happen, then he would. Within days, Jobs presented to Markkula his vision of Lisa, which included a 16-bit microprocessor, a bit-mapped display, a mouse for controlling the on-screen cursor, and a keyboard that was separate from the main computer box. In other words, it was a Xerox Alto, minus the Alto’s built-in networking. “Why would anyone need an umbilical cord to his company?” Jobs asked.

Lisa was a vision that made the as-yet-unconceived IBM PC look primitive in comparison. And though he didn’t know it at the time, it was also a development job far bigger than Steve Jobs could even imagine.

One of the many things that Steve Jobs didn’t know in those days was Cringely’s Second Law, which I figured out one afternoon with the assistance of a calculator and a six-pack of Heineken. Cringely’s Second Law states that in computers, ease of use with equivalent performance varies with the square root of the cost of development. This means that to design a computer that’s ten times easier to use than the Apple II, as the Lisa was intended to be, would cost 100 times as much money. Since it cost around $500,000 to develop the Apple II, Cringely’s Second Law says the cost of building the Lisa should have been around $50 million. It was.
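The arithmetic behind that claim can be checked with a minimal sketch. It assumes the law is read literally: if ease of use grows with the square root of development cost, then cost grows with the square of the ease-of-use multiple. The function name `development_cost` and the constant `APPLE_II_COST` are illustrative labels only, and the $500,000 figure is the approximate one quoted above.

```python
def development_cost(base_cost: int, ease_factor: int) -> int:
    """Estimated cost of a machine `ease_factor` times easier to use than a
    baseline that cost `base_cost` to develop.

    Assumes ease of use varies with the square root of development cost,
    so cost scales with the square of the ease-of-use multiple.
    """
    return base_cost * ease_factor ** 2


# Approximate Apple II development cost quoted in the text.
APPLE_II_COST = 500_000

# Lisa was meant to be ten times easier to use than the Apple II.
lisa_cost = development_cost(APPLE_II_COST, 10)
print(f"${lisa_cost:,}")  # prints $50,000,000
```

A tenfold gain in ease of use multiplies the cost by 100, which is how $500,000 becomes $50 million.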

Let’s pause the history for a moment and consider the implications of this law for the next generation of computers. There was no significant difference in ease of use between Lisa and its follow-on, the Macintosh. So if you’ve been sitting on your hands waiting to buy a computer that is ten times as easy to use as the Macintosh, remember that it’s going to cost around $5 billion (1982 dollars, too) to develop. Apple’s R&D budget is about $500 million, so don’t expect that computer to come from Cupertino. IBM’s R&D budget is about $3 billion, but that’s spread across many lines of computers, so don’t expect your ideal machine to come from Big Blue either. The only place such a computer is going to come from, in fact, is a collaboration of computer and semiconductor companies. That’s why the computer world is suddenly talking about Open Systems, because building hardware and software that plug and play across the product lines and R&D budgets of a hundred companies is the only way that future is going to be born. Such collaboration, starting now, will be the trend in the next century, so put your wallet away for now.

Meanwhile, back in Cupertino, Mike Markkula knew from his days working in finance at Intel just how expensive a big project could become. That’s why he chose John Couch, a software professional with a track record at Hewlett-Packard, to head the super-secret Lisa project. Jobs was crushed by losing the chance to head the realization of his own dream.

Couch was yet another Adam Cartwright, and Jobs hated him.

The new ideas embodied in Lisa would have been Jobs’s way of breaking free from his typecasting as Little Joe. He would become, instead, the prophet of a new kind of computing, taking his power from the ideas themselves and selling this new type of computing to Apple and to the rest of the world. And Apple accepted both his dream and the radical philosophy behind it, which said that technical leadership was as important as making money, but Markkula still wouldn’t let him lead the project.

Vision, you’ll recall, is the ability to see potential in the work of others. The jump from having vision to being a visionary, though, is a big one. The visionary is a person who has both the vision and the willingness to put everything on the line, including his or her career, to further that vision. There aren’t many real visionaries in this business, but Steve Jobs is one. Jobs became the perfect visionary, buying so deeply into the vision that he became one with it. If you were loyal to Steve, you embraced his vision. If you did not embrace his vision, you were either an enemy or brain-dead.

So Chairman Jobs assigned himself to Raskin’s Macintosh group, pushed the other man aside, and converted the Mac into what was really a smaller, cheaper Lisa. As the holder of the original Lisa vision, Jobs ignorantly criticized the big-buck approach being taken by Couch and Larry Tesler, who had by then joined Apple from Xerox PARC to head Lisa software development. Lisa was going to be too big, too slow, too expensive, Jobs argued. He bet Couch $5,000 that Macintosh would hit the market first. He lost.

The early engineers were nearly all gone from Apple by the time Lisa development began. The days when the company ran strictly on adrenalin and good ideas were fading. No longer did the whole company meet to put computers in boxes so they could ship enough units by the end of the month. With the introduction of the Apple III in 1980, life had become much more businesslike at Apple, which suddenly had two product lines to sell.

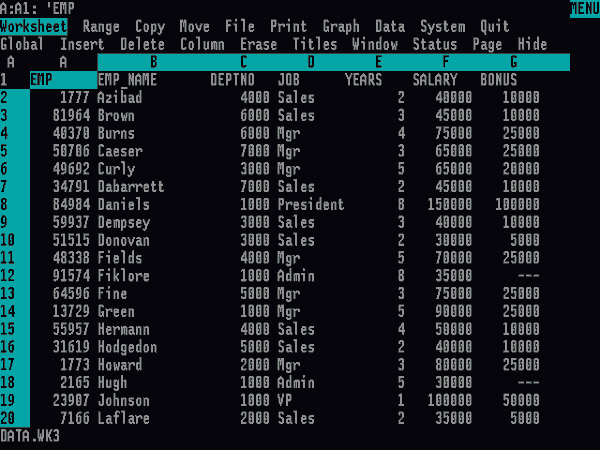

It was still the norm, though, for technical people to lead each product development effort, building products that they wanted to play with themselves rather than products that customers wanted to buy. For example, there was Mystery House, Apple’s own spreadsheet, intended to kill VisiCalc because everyone who worked on Apple II software decided en masse that they hated Terry Opdendyk, president of VisiCorp, and wanted to hurt him by destroying his most important product. There was no real business reason to do Mystery House, just spite. The spreadsheet was written by Woz and Randy Wigginton and never saw action under the Apple label because it was given up later as a bargaining chip in negotiations between Apple and Microsoft. Some Mystery House code lives on today in a Macintosh spreadsheet from Ashton-Tate called Full Impact.

But John Couch and his Lisa team were harbingers of a new professionalism at Apple. Apple had in Lisa a combination of the old spirit of Apple — anarchy, change, new stuff, engineers working through the night coming up with great ideas — and the introduction of the first nontechnical marketers, marketers with business degrees — the “suits.” These nontechnical marketers were, for the first time at Apple, the project coordinators, while the technical people were just members of the team. And rather than the traditional bunch of hackers from Homestead High, Lisa hardware was developed by a core of engineers hired away from Hewlett-Packard and DEC, while the software was developed mainly by ex-Xerox programmers, who were finally getting a chance to bring to market a version of what they’d worked on at Xerox PARC for most of the preceding ten years. Lisa was the most professional operation ever mounted at Apple — far more professional than anything that has followed.

Lisa was ahead of its time. When most microcomputers came with a maximum of 64,000 characters of memory, the Lisa had 1 million characters. When most personal computers were capable of doing only one task at a time, Lisa could do several. The computer was so easy to use that customers were able to begin working within thirty minutes of opening the box. Setting up the system was so simple that early drafts of the directions used only pictures, no words. With its mouse, graphical user interface, and bit-mapped screen, Lisa was the realization of nearly every design feature invented at Xerox PARC except networking.

Lisa was professional all the way. Painstaking research went into every detail of the user interface, with arguments ranging up and down the division about what icons should look like, whether on-screen windows should just appear and disappear or whether they should zoom in and out. Unlike nearly every other computer in the world, Lisa had no special function keys to perform complex commands in a single keystroke, and offered no obscure ways to hold down three keys simultaneously and, by so doing, turn the whole document into Cyrillic, or check its spelling, or some other such nonsense.

To make it easy to use, Lisa followed PARC philosophy, which meant that no matter what program you were using, hitting the E key just put an E on-screen rather than sending the program into edit mode, or expert mode, or erase mode. Modes were evil. At PARC, you were either modeless or impure, and this attitude carried over to Lisa, where Larry Tesler’s license plate read NO MODES. Instead of modes, Lisa had a very simple keyboard that was used in conjunction with the mouse and onscreen menus to manipulate text and graphics without arcane commands.

Couch left nothing to chance. Even the problem of finding a compelling application for Lisa was covered; instead of waiting for a Dan Bricklin or a Mitch Kapor to introduce the application that would make corporate America line up to buy Lisas, Apple wrote its own software — seven applications covering everything that users of microcomputers were then doing with their machines, including a powerful spreadsheet.

Still, when Lisa hit the market in 1983, it failed. The problem was its $10,000 price, which meant that Lisa wasn’t really a personal computer at all but the first real workstation. Workstations can cost more than PCs because they are sold to companies rather than to individuals, but they have to be designed with companies in mind, and Lisa wasn’t. Apple had left out that umbilical cord to the company that Steve Jobs had thought unnecessary. At $10,000, Lisa was being sold into the world of corporate mainframes, and Lisa’s inability to communicate with those mainframes doomed it to failure.

Despite the fact that Lisa had been his own dream and Apple was his company, Steve Jobs was thrilled with Lisa’s failure, since it would make the inevitable success of Macintosh all the more impressive.

Back in the Apple II and Apple III divisions, life still ran at a frenetic pace. Individual contributors made major decisions and worked on major programs alone or with a very few other people. There was little, if any, management, and Apple spent so much money, it was unbelievable. With Raskin out of the way, that’s how Steve Jobs ran the Macintosh group too. The Macintosh was developed beneath a pirate flag. The lobby of the Macintosh building was lined with Ansel Adams prints, and Steve Jobs’s BMW motorcycle was parked in a corner, an ever-present reminder of who was boss. It was a renegade operation and proud of it.

When Lisa was taken from him, Jobs went through a paradigm shift that combined his dreams for the Lisa with Raskin’s idea of appliancelike simplicity and low cost. Jobs decided that the problem with Lisa was not that it lacked networking capability but that its high price doomed it to selling in a market that demanded networking. There’d be no such problem with Macintosh, which would do all that Lisa did but at a vastly lower price. Never mind that it was technically impossible.

Lisa was a big project, while Macintosh was much smaller because Jobs insisted on an organization small enough that he could dominate every member, bending each to his will. He built the Macintosh on the backs of Andy Hertzfeld, who wrote the system software, and Burrell Smith, who designed the hardware. All three men left their idiosyncratic fingerprints all over the machine. Hertzfeld gave the Macintosh an elegant user interface and terrific look and feel, mainly copied from Lisa. He also made Macintosh very, very difficult to write programs for. Smith was Jobs’s ideal engineer because he’d come up from the Apple II service department (“I made him,” Jobs would say). Smith built a clever little box that was incredibly sophisticated and nearly impossible to manufacture.

Jobs’s vision imposed so many restraints on the Macintosh that it’s a wonder it worked at all. In contrast to Lisa, with its million characters of memory, Raskin wanted Macintosh to have only 64,000 characters, a target that Jobs continued to aim for until long past the time when it became clear to everyone else that the machine needed more memory. Eventually, he “allowed” the machine to grow to 128,000 characters, though even with that amount of memory, the original 128K Macintosh still came to fit people’s expectations that mechanical things don’t work. Apple engineers, knowing that further memory expansion was inevitable, built in the capability to expand the 128K machine to 512K, though they couldn’t tell Jobs what they had done because he would have made them change it back.

Markkula gave up the presidency of Apple at about the time Lisa was introduced. As chairman, Jobs went looking for a new president, and his first choice was Don Estridge of IBM, who turned the job down. Jobs’s second choice was John Sculley, who came over from PepsiCo for the same package that Estridge had rejected. Sculley was going to be as much Jobs’s creation as Burrell Smith had been. It was clear to the Apple technical staff that Sculley knew nothing at all about computers or the computer business. They dismissed him, and nobody even noticed when Sculley was practically invisible during his first months at Apple. They thought of him as Jobs’s lapdog, and that’s what he was.

With Mike Markkula again in semiretirement, concentrating on his family and his jet charter business, there was no adult supervision in place at Apple, and Jobs ran amok. With total power, the willful kid who’d always resented the fact that he had been adopted, created at Apple a metafamily in which he played the domineering, disrespectful, demanding type of father that he imagined must have abandoned him those many years ago.

Here’s how Steve-As-Dad interpreted Management By Walking Around. Coming up to an Apple employee, he’d say, “I think Jim [another employee] is shit. What do you think?”

If the employee agreed that Jim was shit, Jobs went to the next person and said, “Bob and I think Jim is shit. What do you think?”

If the first employee disagreed and said that Jim was not shit, Jobs would move on to the next person, saying, “Bob and I think Jim is great. What do you think?”

Public degradation played an important role too. When Jobs finally succeeded in destroying the Lisa division, he spoke to the assembled workers who were about to be reassigned or laid off. “I see only B and C players here,” he told the stunned assemblage. “All the A players work for me in the Macintosh division. I might be interested in hiring two or three of you [out of 300]. Don’t you wish you knew which ones I’ll choose?”

Jobs was so full of himself that he began to believe his own PR, repeating as gospel stories about him that had been invented to help sell computers. At one point a marketer named Dan’l Lewin stood up to him, saying, “Steve, we wrote this stuff about you. We made it up.”

Somehow, for all the abuse he handed out, nobody attacked Jobs in the corridor with a fire axe. I would have. Hardly anyone stood up to him. Hardly anyone quit. Like the Bhagwan, driving around Rancho Rajneesh each day in another Rolls-Royce, Jobs kept his troops fascinated and productive. The joke going around said that Jobs had a “reality distortion field” surrounding him. He’d say something, and the kids in the Macintosh division would find themselves replying, “Drink poison Kool-Aid? Yeah, that makes sense.”

Steve Jobs gave impossible tasks, never acknowledging that they were impossible. And, as often happens with totalitarian rulers, most of his impossible demands were somehow accomplished, though at a terrible cost in ruined careers and failed marriages.

Beyond pure narcissism, which was there in abundance, Jobs used these techniques to make sure he was surrounding himself with absolutely the best technical people. The best, nothing but the best, was all he would tolerate, which meant that there were crowds of less-than-godlike people who went continually up and down in Jobs’s estimation, depending on how much he needed them at that particular moment. It was crazy-making.

Here’s a secret to getting along with Steve Jobs: when he screams at you, scream back. Take no guff from him, and if he’s the one who is full of shit, tell him, preferably in front of a large group of amazed underlings. This technique works because it gets Jobs’s attention and fits in with his underlying belief that he probably is wrong but that the world just hasn’t figured that out yet. Make it clear to him that you, at least, know the truth.

Jobs had all kinds of ideas he kept throwing out. Projects would stop. Projects would start. Projects would get so far and then be abandoned. Projects would go on in secret, because the budget was so large that engineers could hide things they wanted to do, even though that project had been canceled or never approved. For example, Jobs thought at one point that he had killed the Apple III, but it went on anyhow.

Steve Jobs created chaos because he would get an idea, start a project, then change his mind two or three times, until people were doing a kind of random walk, continually scrapping and starting over. Apple was confusing suppliers and wasting huge amounts of money doing initial manufacturing steps on products that never appeared.

Despite the fact that Macintosh was developed with a much smaller team than Lisa and it took advantage of Lisa technology, the little computer that was supposed to have sold at K-Mart for $600 ended up costing just as much to bring to market as Lisa had. From $600, the price needed to make a MacProfit doubled and tripled until the Macintosh could no longer be imagined as a home computer. Two months before its introduction, Jobs declared the Mac to be a business computer, which justified the higher price.

Apple clearly wasn’t very disciplined. Jobs created some of that, and a lot of it was created by the fact that it didn’t matter to him whether things were organized. Apple people were rewarded for having great ideas and for making great technical contributions but not for saving money. Policies that looked as if they were aimed at saving money actually had other justifications. Apple people still share hotel rooms at trade shows and company meetings, for example, but that’s strictly intended to limit bed hopping, not to save money. Apple is a very sexy company, and Jobs wanted his people to lavish that libido on the products rather than on each other.

Oh, and Apple people were also rewarded for great graphics; brochures, ads, everything that represented Apple to its customers and dealers, had to be absolutely top quality. In addition, the people who developed Apple’s system of dealers were rewarded because the company realized early on that this was its major strength against IBM.

A very dangerous thing happened with the introduction of the Macintosh. Jobs drove his development team into the ground, so when the Mac was introduced in 1984, there was no energy left, and the team coasted for six months and then fell apart. And during those six months, John Sculley was being told that there were development projects going on in the Macintosh group that weren’t happening. The Macintosh people were just burned out, the Lisa Division was destroyed and its people were not fully integrated into the Macintosh group, so there was no new blood.

It was a time when technical people should have been fixing the many problems that come with the first version of any complex high-tech product. But nobody moved quickly to fix the problems. They were just too tired.

The people who made the Macintosh produced a miracle, but that didn’t mean their code was wonderful. The software development tools to build applications like spreadsheets and word processors were not available for at least two years. Early Macintosh programs had to be written first on a Lisa and then recompiled to run on the Mac. None of this mattered to Jobs, who was in heaven, running Apple as his own private psychology experiment, using up people and throwing them away. Attrition, strangled marriages, and destroyed careers were unimportant, given the broader context of his vision.

The idea was to have a large company that somehow maintained a start-up philosophy, and Jobs thrived on it. He planned to develop a new generation of products every eighteen months, each one as radically different from the one before as the Macintosh had been from the Apple II. By 1990, nobody would even remember the Macintosh, with Apple four generations down the road. Nothing was sacred except the vision, and it became clear to him that the vision could best be served by having the people of Apple live and work in the same place. Jobs had Apple buy hundreds of acres in the Coyote Valley, south of San Jose, where he planned to be both employer and landlord for his workers, so they’d never ever have a reason to leave work.

Unchecked, Jobs was throwing hundreds of millions of dollars at his dream, and eventually the drain became so bad that Mike Markkula revived his Ben Cartwright role in June 1985. By this point Sculley had learned a thing or two in his lapdog role and felt ready to challenge Jobs. Again, Markkula decided against Jobs, this time backing Sculley in a boardroom battle that led to Jobs’s being banished to what he called “Siberia”: Bandley 6, an Apple building with only one office. It was an office for Steve Jobs, who no longer had any official duties at the company he had founded in his parents’ garage. Jobs left the company soon after.

Here’s what was happening at Apple in the early 1980s that Wall Street analysts didn’t know. For its first five years in business, Apple did not have a budget. Nobody really knew how much money was coming in or going out or what the company was buying. In the earliest days, this wasn’t a problem because a company that was being run by characters who not long before had made $3 per hour dressing up as figures from Alice in Wonderland at a local shopping mall just wasn’t inclined toward extravagance. Later, it seemed that the money was coming in so fast that there was no way it could all be spent. In fact, when the first company budget happened in 1982, the explanation was that Apple finally had enough people and projects where they could actually spend all the money they made if they didn’t watch it. But even when they got a budget, Apple’s budgeting process was still a joke. All budgets were done at the same time, so rather than having product plans from which support plans and service plans would flow — a logical plan based on products that were coming out — everybody all at once just said what they wanted. Nothing was coordinated.

It really wasn’t until 1985 that there was any logical way of making the budget, where the product people would say what products would come out that year, and then the marketing people would say what they were going to do to market these products, and the support people would say how much it was going to cost to support the products.

It took Sculley at least six months, maybe a year, from the time he deposed Jobs to understand how out of control things were. It was total anarchy. Sculley’s major budget gains in the second half of 1985 came from laying off 20 percent of the work force — 1,200 people — and forcing managers to make sense of the number of suppliers they had and the spare parts they had on hand. Apple had millions of dollars of spare parts that were never going to be used, and many of these were sold as surplus. Sculley instituted some very minor changes in 1986 — reducing the number of suppliers and beginning to simplify the peripherals line so that Macintosh printers, for example, would also work with the Apple II, Apple III, and Lisa.

The large profits that Sculley was able to generate during this period came entirely from improved budgeting and from simply cancelling all the whacko projects started by Steve Jobs. Sculley was no miracle worker.

Who was this guy Sculley? Raised in Bermuda, scion of an old-line, old-money family, he trained as an architect, then worked in marketing at PepsiCo for his entire career before joining Apple. A loner, his specialty at the soft drink maker seemed to be corporate infighting, a habit he brought with him to Apple.

Sculley is not an easy man to be with. He is uneasy in public and doesn’t fit well with the casual hacker class that typified the Apple of Woz and Jobs. Spend any time with Sculley and you’ll notice his eyes, which are dark, deep-set, and hawklike, with white visible on both sides of the iris and above it when you look at him straight on. In traditional Japanese medicine, where facial features are used for diagnosis, Sculley’s eyes are called sanpaku and are attributed to an excess of yang. It’s a condition that Japanese doctors associate with people who are prone to violence.

With Jobs gone, Apple needed a new technical visionary. Sculley tried out for the role, and supported people like Bill Atkinson, Larry Tesler, and Jean-Louis Gassee as visionaries, too. He tried to send a message to the troops that everything would be okay, and that wonderful new products would continue to come out, except in many ways they didn’t.

Sculley and the others were surrogate visionaries compared to Jobs. Sculley’s particular surrogate vision was called Knowledge Navigator, mapped out in an expensive video and in his book, Odyssey. It was a goal, but not a product, deliberately set in the far future. Jobs would have set out a vision that he intended his group actually to accomplish. Sculley didn’t do that because he had no real goal.

By rejecting Steve Jobs’s concept of continuous revolution but not offering a specific alternative program in its place, Sculley was left with only the status quo. He saw his job as milking as much money as possible out of the current Macintosh technology and allowing the future to take care of itself. He couldn’t envision later generations of products, and so there would be none. Today the Macintosh is a much more powerful machine, but it still has an operating system that does only one thing at a time. It’s the same old stuff, only faster.

And along the way, Apple abandoned the $1-billion-per-year Apple II business. Steve Jobs had wanted the Apple II to die because it wasn’t his vision. Then Jean-Louis Gassee came in from Apple France and used his background in minicomputers to claim that there really wasn’t a home market for personal computers. Earth to Jean-Louis! Earth to Jean-Louis! So Apple ignored the Macintosh home market to develop the Macintosh business market, and all the while, the company’s market share continued to drop.

Sculley didn’t have a clue about which way to go. And like Markkula, he faded in and out of the business, residing in his distant tower for months at a time while the latest group of subordinates would take their shot at running the company. Sculley is a smart guy but an incredibly bad judge of people, and this failing came to permeate Apple under his leadership.

Sculley falls in love with people and gives them more power than they can handle. He chose Gassee to run Apple USA and the phony-baloney Frenchman caused terrific damage during his tenure. Gassee correctly perceived that engineers like to work on hot products, but he made the mistake of defining “hot” as “high end,” dooming Apple’s efforts in the home and small business markets.

Gassee’s organization was filled with meek sycophants. In his staff meetings, Jean-Louis talked, and everyone else listened. There was no healthy discussion, no wild and crazy brainstorming that Apple had been known for and that had produced the company’s most innovative programs. It was like Stalin’s staff meeting.

Another early Sculley favorite was Allen Loren, who came to Apple as head of management information systems — the chief administrative computer guy — and then suddenly found himself in charge of sales and marketing simply because Sculley liked him. Loren was a good MIS guy but a bad marketing and sales guy.

Loren presided over Apple’s single greatest disaster, the price increase of 1988. In an industry built around the concept of prices’ continually dropping, Loren decided to raise prices on October 1, 1988, in an effort to raise Apple’s sinking profit margins. By raising prices Loren was fighting a force of nature, like asking the earth to reverse its direction of rotation, the tides to stop, mothers everywhere to stop telling their sons to get haircuts. Ignorantly, he asked the impossible, and the bottom dropped out of Apple’s market. Sales tumbled, market share tumbled. Any momentum that Apple had was lost, maybe for years, and Sculley allowed that to happen.

Loren was followed as vice-president of marketing by David Hancock, who was known throughout Apple as a blowhard. When Apple marketing should have been trying to recover from Loren’s pricing mistake, the department did little under Hancock. The marketing department was instead distracted by nine reorganizations in less than two years. People were so busy covering their asses that they weren’t working, so Apple’s business in 1989 and 1990 showed what happens when there is no marketing at all.

The whole marketing operation at Apple is now run by former salespeople, a dangerous trend. Marketing is the creation of long-term demand, while sales is execution of marketing strategies. Marketing is buying the land, choosing what crop to grow, planting the crop, fertilizing it, and then deciding when to harvest. Sales is harvesting the crop. Salespeople in general don’t think strategically about the business, and it’s this short-term focus that’s prevalent right now at Apple.

When Apple introduced its family of lower-cost Macintoshes in the fall of 1990, marketing was totally unprepared for their popularity. The computer press had been calling for lower-priced Macs, but nobody inside Apple expected to sell a lot of the boxes. Blame this on the lack of marketing, and also blame it on the demise, two years before, of Apple’s entire market research department, which fell in another political game. When the Macintosh Classic, LC, and IIsi appeared, their overwhelming popularity surprised, pleased, but then dismayed Apple, which was still staffing up as a company that sold expensive computers. Profit margins dropped despite an 85 percent increase in sales, and Sculley found himself having to lay off 15 percent of Apple’s work force because of unexpected success that should have been, could have been, planned for.

Sculley’s current favorite is Fred Forsythe, formerly head of manufacturing but now head of engineering, with major responsibility for research and development. Like Loren, Forsythe was good at the job he was originally hired to do, but that does not at all mean he’s the right man for the R&D job. Nor is Sculley, who has taken to calling himself Apple’s Chief Technical Officer, an insult to the company’s real engineers.

So why does Sculley make these terrible personnel moves? Maybe he wants to make sure that people in positions of power are loyal to him, as all these characters are. And by putting them in jobs they are not really up to doing, they are kept so busy that there is no time or opportunity to plot against Sculley. It’s a stupid reason, I know, and one that has cost Apple billions of dollars, but it’s the only one that makes any sense.

With all the ebb and flow of people into and out of top management positions at Apple, it reached the point where it was hard to get qualified people even to accept top positions, since they knew they were likely to be fired. That’s when Sculley started offering signing bonuses. Joe Graziano, who’d left Apple to be the chief financial officer at Sun Microsystems, was lured back with a $1.5 million bonus in 1990. Shareholders and Apple employees who weren’t raking in such big rewards complained about the bonuses, but the truth is that it was the only way Sculley could get good people to work for him. (Other large sums are often counted in “Graz” units. A million and a half dollars is now known as “1 Graz”—a large unit of currency in Applespeak.)

The rest of the company was as confused as its leadership. Somehow, early on, reorganizations, or “reorgs,” became part of the Apple culture. They happen every three to six months and come from Apple’s basic lack of understanding that people need stability in order to be able to work together.

Reorganizations have become so much of a staple at Apple that employees categorize them into two types. There’s the “Flint Center reorganization,” which is so comprehensive that Apple calls its Cupertino workers into the Flint Center auditorium at De Anza College to hear the top executives explain it. And there’s the smaller “lunchroom reorganization,” where Apple managers call a few departments into a company cafeteria to hear the news.

The problem with reorgs is that they seem to happen overnight, and many times they are handled by groups being demolished and people being told to go to Human Resources and find a new job at Apple. And so the sense at Apple is that if you don’t like where you are, don’t worry, because three to six months from now everything is going to be different. At the same time, though, the continual reorganizations mean that nobody has long-term responsibility for anything. Make a bad decision? Who cares! By the time the bad news arrives, you’ll be gone and someone else will have to handle the problems.

If you do like your job at Apple, watch it, because unless you are in some backwater that no one cares about and is severely understaffed, your job may be gone in a second, and you may be “on the street,” with one or two months to find a job at Apple.

Today, the sense of anomie (alienation, disconnectedness) at Apple is major. The difference between the old Apple, which was crazy, and the new Apple is anomie. People are alienated. Apple still gets the bright young people. They come into Apple, and instead of getting all fired up about something, they go through one or two reorgs and get disoriented. I don’t hear people who are really happy to be at Apple anymore. They wonder why they are there, because they’ve had two bosses in six months, and their job has changed twice. It’s easy to mix up groups and end up not knowing anyone. That’s a real problem.

“I don’t know what will happen with Apple in the long term,” said Larry Tesler. “It all depends on what they do.”

They? Don’t you mean we, Larry? Has it reached the point where an Apple vice-president no longer feels connected to his own company?

With the company in a constant state of reorganization, there is little sense of an enduring commitment to strategy at Apple. It’s just not in the culture. Surprisingly, the company has a commitment to doing good products; it’s the follow-through that suffers. Apple specializes in flashy product introductions but then finds itself wandering away in a few weeks or months toward yet another pivotal strategy and then another.

Compare this with Microsoft, which is just the opposite, doing terrific implementation of mediocre products. For example, in the area of multimedia computing — the hot new product classification that integrates computer text, graphics, sound, and full-motion video — Microsoft’s Multimedia Windows product is ho-hum technology acquired from a variety of sources and not very well integrated, but the company has implemented it very well. Microsoft does a good roll-out, offers good developer support, and has the same people leading the operation for years and years. They follow the philosophy that as long as you are the market leader and are still throwing technology out there, you won’t be dislodged.

Microsoft is taking the Japanese approach of not caring how long or how much money it takes to get multimedia right. They’ve been at it for six years so far, and if it takes another six years, so be it. That’s what makes me believe Microsoft will continue to be a factor in multimedia, no matter how bad its products are.

In contrast to Microsoft, Apple has a very elegant multimedia architecture called QuickTime, which does for time-based media what Apple’s QuickDraw did for graphics. QuickTime has tools for integrating video, animation, and sound into Macintosh programs. It automatically synchronizes sound and images and provides controls for playing, stopping, and editing video sequences. QuickTime includes technology for compressing images so they require far less memory for storage. In short, QuickTime beats the shit out of Microsoft’s Multimedia Extensions for Windows, but Apple is also taking a typical short-term view. Apple produced a flashy intro, but has no sense of enduring commitment to its own strategy.

The good and the bad that was Apple all came from Steve Jobs, who in 1985 was once again an orphan and went off to found another company, NeXT Inc., and take another crack at playing the father role. Steve sold his Apple stock in a huff (and at a stupidly low price), determined to do it all over again, to build another major computer company, and to do it his way.

“Steve never knew his parents,” recalled Trip Hawkins, who went to Apple as manager of market planning in 1979. “He makes so much noise in life, he cries so loud about everything, that I keep thinking he feels that if he just cries loud enough, his real parents will hear and know that they made a mistake giving him up.”
