By Scott M. Fulton, III, Betanews

Last Tuesday, at a conference attended by invited members of the press, Betanews among them, Markham Erickson, the Executive Director of the Open Internet Coalition (which advocates for Google, Facebook, PayPal, Netflix, Skype, Sony, Twitter, Amazon, and TiVo, among others), urged the Federal Communications Commission to bounce back from its loss to Comcast last week in DC Circuit Court by affirming its right to regulate broadband Internet services under a different section of US telecommunications law than it has used before.

Since that time, a surprising amount of water has passed under the bridge, including a round of Senate hearings Wednesday in which leaders suggested new legislation could solve the problem, so that the FCC would not have to declare regulatory authority under Title II of the Telecommunications Act — the part that typically applies to telephone networks. A key Senate Republican, Kay Bailey Hutchison (R – Texas), vowed to oppose any effort by the FCC to redeclare under Title II.

Then in a public letter posted to many online political sites on Wednesday, including The Hill, Commerce Committee member and former presidential candidate Sen. John Kerry (D – Mass.) called upon ordinary citizens to act on the FCC’s behalf. In an extraordinary bit of irony, he asked interested parties to call their congressperson and urge her to not do anything…the level of inaction necessary to enable the FCC to redeclare its broadband mission, unencumbered by lawmakers.

The details get a little technical, but it basically boils down to this: back in the Bush Administration, the FCC classified the Internet as an “information service” rather than a “communications service.” This limits what the FCC can do, which is, of course, just the way the big telecom companies want it.

But the FCC could reclassify the service and preserve its traditional role. The telecom companies are giving it everything they’ve got to keep this from happening, and if you don’t speak up, they could win.

A win for them would mean that the FCC couldn’t protect Net Neutrality, so the telecoms could throttle traffic as they wish — it would be at their discretion. The FCC couldn’t help disabled people access the Internet, give public officials priority access to the network in times of emergency, or implement a national broadband plan to improve the deplorable situation where the United States — the country that invented the Internet — lags far behind in our broadband infrastructure. In short, it would take away a key check on the power of phone and cable corporations to do whatever they want with our Internet.

The telecom companies try to say that only Congress can pass a law to make this better. But having suffered through a year of record filibusters and procedural hurdles to grind the process to a halt, do you really think it’s a good idea for Congress to try and do this, when the FCC can have the authority right now?

Look, eventually we may need to build a new legal framework for broadband service, but the Internet is moving too fast, the economy needs the innovation of the Internet too badly, to wait. Especially because we don’t have to. The FCC can act right now.

Throwing monkey wrenches into the process since Sen. Kerry’s plea, two former FCC chairmen, in an interview with the Washington Post‘s Cecelia Kang, both advised the agency they led not to attempt reclassification, as well as to start deciding what powers of broadband regulation the FCC should not have, by definition. Under existing laws, the former chairmen agreed, the FCC could still implement the current chairman’s Broadband Plan.

“The Broadband Plan is the first comprehensive plan for rolling out communications and media services to all Americans, the first in the history of our country,” former FCC chairman Reed Hundt told the Post. “We’ve always approached this task, for better or worse, on a piecemeal basis. Now we have a complete plan…The FCC has plenty of jurisdictional power under existing statutes to implement those plans. Exactly which one is the one that will get through the very, very difficult passageway that is always the Court of Appeals, I don’t know. That’s too hard for me, but they have plenty of powers. There’s no question about that. Congress does not need to pass a law to have the Broadband Plan to go forth and be put into place, and the same thing is true with respect to net neutrality.”

Then as if there weren’t enough fuel for this fire, Washington-based Internet policy advocate (and former ZDNet blogger) George Ou argued that even if the FCC were to redeclare broadband a telecom service under Title II, it would still lack the ability to tell Comcast, for instance, to stop throttling its Internet traffic.

“Up until 2005, when the FCC reclassified wireline transport into an ‘Information Service’ under Title I, Title II Common Carrier requirements had only applied to the underlying DSL transport (the physical telephone wiring and the DSL head-end switches called DSLAMs) which were labeled as ‘Telecommunications Services,’” Ou wrote on Wednesday. “Title II classification required the Telecoms to share their DSL transport infrastructure with competing ISPs, and it gave the FCC the authority to regulate wholesale transport prices. But even before the 2005 reclassification, Title II had only applied to the transport and not the Internet Services riding on top of that transport. That means the entire discussion on Title II is irrelevant to the Comcast/BitTorrent case since that was an issue at the Internet service level and not transport.”

Betanews discussed all these events happening in the interim with the leader of Tuesday’s press conference, Open Internet Coalition Executive Director Markham Erickson, also founding partner of the Washington law firm Holch & Erickson LLP.

MARKHAM ERICKSON, OIC: I think George is right with point #1, that the DC Circuit didn’t obliterate the concept of ancillary authority; that legal theory still exists. The court just further described what they think ancillary authority means. It means that anything you’re doing under Title I has to be tied to a specific statutory mandate under Titles II, III, or VI of the Communications Act; and that what the FCC was doing in the Comcast decision — relying primarily on Section 706 and 230 of the Communications Act — neither of those sections provided a statutory mandate, and they were mere policy statements rather than statutory mandates.

The DC Circuit then went further to talk about several other provisions of the Communications Act that they didn’t think gave the FCC enough of a statutory hook to utilize a theory of ancillary authority. So while they left open some room for ancillary authority, it’s very narrow room, and it’s not quite clear whether you’d have, under their tests, a survivable theory of ancillary authority to regulate the behavior that Comcast was engaging in.

However, George is wrong on his second point, and that is, if you do move to reclassification, certainly the FCC would have the legal authority to draft regulations that would govern exactly the kind of behavior Comcast was engaging in. This is where George and others sometimes mistakenly create a straw man, intentionally or unintentionally. If the 2002 cable modem order (which began the process of calling Internet access services “information services” rather than telecommunications services) is revisited or reversed or modified in some way, it doesn’t mean you have to go back to the old-style regulatory approach that governed telecommunications services for so many decades. That’s a straw man, I think, that network operators and advocates on the other side like to use, and you hear the talking points that advocates on our side would like to return to old-style telephone regulations, burdensome regulations that would involve things like requiring tariffing, and wholesale provisioning of capacity for competing ISPs, and other things. It’s indeed not an either/or in that situation. Title II certainly gives, particularly under Sections 201 and 202, a model for dealing with the facilities-based access providers, for dealing with the Transport layer, and governing how the Transport layer deals with the content that flows over the Transport layer.

So I think I would strongly disagree with George’s statement that, if you reclassify, you would have to go back to things that were done prior to the reclassification of these services from telecom, to information services. If you go back to classifying them as telecom services, it doesn’t mean you have to create all those old-style telephone regulations again. It’s just not the case.

SCOTT FULTON, Betanews: If you don’t have to revert to old-style, 1994 telephone regulations, what does one do instead? It seems like you’re implying that reclassification is a first step, and that there are several steps thereafter.

MARKHAM ERICKSON, Executive Director, Open Internet Coalition: Yes, I think there’s at least another step after that. First, you’d have to reclassify. You would then have to forbear from some of the Title II provisions that you wouldn’t want to see applied to today’s Internet access providers, that may have applied to old-style telephone carriers. And then you’d have to develop rules that would interpret the provisions in Title II, or use those sections of Title II, and maybe parts of Title I under ancillary authority, to address the things that are in the Broadband Plan, whether it’s network neutrality or USF reform, or the privacy issues, the truth in billing provisions, the things that [FCC] Chairman Julius Genachowski [Wednesday] in his Senate testimony talked about wanting to move forward on.

There’s not just one way to do it; there are several options ahead of them, and I don’t want to get into those yet, because we’re still working through those, and I’m sure that Chairman Genachowski’s team is working through those. But I think the talking points you’re seeing from George Ou are really setting up a non-existent straw man.

SCOTT FULTON: You already answered my question on Tuesday with regard to the role of Congress. Can the Commission by itself do these things that you are requesting of it, without the intervention or the oversight of Congress?

MARKHAM ERICKSON: Well, they’ll always have the oversight of Congress. The FCC, by law, is overseen by Congress, they have oversight hearings pretty regularly — [Wednesday] was one of those. But whether you need a Congressional enactment, a new piece of legislation, no, absolutely not. You don’t need that. The FCC, when they engaged in the first place to classify Internet access as information services in 2002, it [rendered] a declaratory ruling without any Congressional intervention. And they can simply revisit that decision on their own, and reverse that decision like they did…in 2002. Now, they have to do so in a well-reasoned way, with legal and factual arguments that would be able to survive review at the DC Circuit, or whatever circuit is looking at that. I think there are facts that are handed to them that they can utilize.

At the same time, if Congress is going to work out a piece of legislation concurrently, that can happen too. I think the point to remember is that Congress doesn’t do anything very quickly. The ’96 Act took roughly ten years from theory to final passage. So the choice really for all of us is, if the FCC had the legal authority to move forward on the National Broadband Plan, should they do that, or should they wait for Congress, which potentially takes ten years? I think the answer is, they should not wait ten years, that they should move forward, and in the meantime, work with Congress as Congress works on a piece of legislation too.

SCOTT FULTON: Without actually signing his name to any particular way of thinking, I noticed that Sen. Rockefeller [Wednesday] said that if it should become necessary for Congress to rewrite laws, and thereby help the Commission along…then he’s happy to start that process. Should Chairman Genachowski, in your mind, say, “Thanks, Jay, but no, thanks?”

MARKHAM ERICKSON: No, I think what you saw Chairman Rockefeller say is both. He said, they’re willing to stand at the ready to draft legislation if it’s necessary. But he also said that, in his opinion, the FCC has all the tools they need to move forward without Congress. So I think he was giving the Chairman the green light to move forward unilaterally without Congress, but saying, if you get stuck, he’ll stand ready to move legislation. I actually think that you could go forward concurrently.

SCOTT FULTON: You mentioned there were only 68 legislative session days before this term is out [67 as of Friday]. Ranking Member Kay Bailey Hutchison drew a line in the sand, warning the Chairman against trying a redeclaration, not necessarily saying what she’ll do as repercussion, but I’ll assume even though the Republican party may be in the super-minority today, it’s likely that after the next Congressional election it will not be…Since it takes years for Congress to get anything done, the Republicans could mount a very significant counter-offensive. We’ve heard the term “net neutrality” bandied about, lifting the spirits of the proponents of the Broadband Plan; I’m imagining how that will play against “big government.”

MARKHAM ERICKSON: The way I look at it, I think in some ways, the Comcast decision was interesting in that, under the concept of ancillary authority as proposed by the Commission — and the Comcast court pointed this out — it was hard to see what the limits of the FCC’s authority would be. If the FCC were to take a much more conservative approach, by narrowly reclassifying broadband access facilities as telecommunications services, you’re talking about a narrow segment of industry, and…we would expect to see a very light touch regulation even on those providers, just to accomplish the goals of the National Broadband Plan and network neutrality. For those who are worried about the FCC’s larger reach into other segments of the Internet, and other things, I think the Comcast decision sort of solves that issue for you. I think the reclassification issue is actually the smaller government, more narrow approach to handling issues like network neutrality.

SCOTT FULTON: Well, when you use “net neutrality” and “light touch” in the same sentence, there are a lot of people who would say it takes a lot more than a light touch — maybe more of a fiery touch — to be able to reach in and tell an Internet service provider, for example, you may not limit the use of an application on your network in a particular fashion, or you may not employ this type of network management technique. Inevitably, someone will call out interference.

MARKHAM ERICKSON: You know, the rules, at least as proposed, would provide network operators an extraordinary amount of flexibility to manage their networks without any second-guessing, without any sort of blacklists or whitelists about things they may or may not do. And I think that’s the right approach. I want to see, and I think most stakeholders want to see, the ISPs be able to manage their networks, secure their networks, and deal with congestion without a lot of second-guessing and without having to look over their shoulders. And again, I think that tends to be a straw man that isn’t based on, at least, the way I read the rules and what we’re interested in seeing.

SCOTT FULTON: If the Commission goes forth with the plan as you see it, and tries reclassification, is there hope for the Commission being able to achieve getting back on that track before the current legislative session expires?

MARKHAM ERICKSON: Absolutely.

Copyright Betanews, Inc. 2010

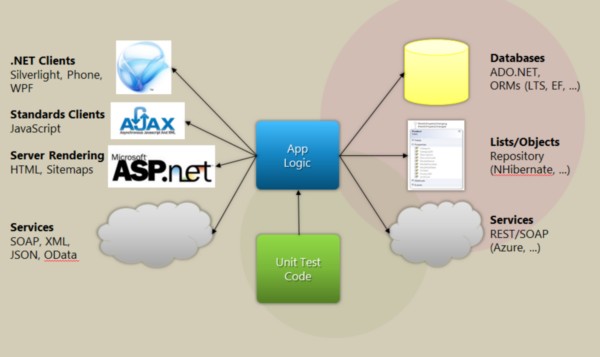

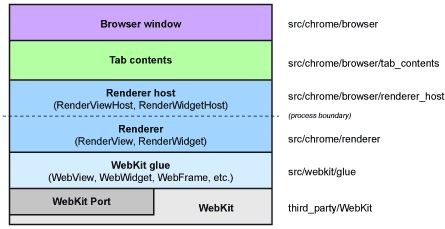

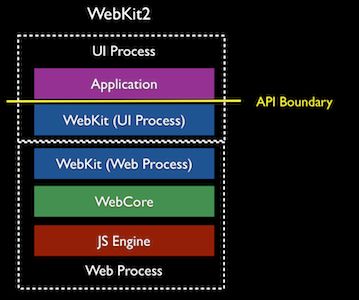
