Clemens Pfeiffer is the CTO of Power Assure and is a 25-year veteran of the software industry, where he has held leadership roles in process modeling and automation, software architecture and database design, and data center management and optimization technologies.

CLEMENS PFEIFFER
Power Assure

About half of all service outages in data centers today are caused by power problems, and that percentage is expected to increase as the electric grid struggles to meet a growing demand on an aging infrastructure. Part of the reason for this shift is that hardware has become remarkably reliable, and the virtualization of servers, storage and network components, or the so-called “Software-Defined Data Center,” has made applications immune to single points of failure. Power problems, by contrast, are only partially addressed by the uninterruptible power supply (UPS) and backup generator.

To enhance their business continuity and disaster recovery strategies, most organizations now operate multiple, geographically dispersed data centers. While this investment is made primarily to protect against catastrophic events caused by major natural disasters, the arrangement can also afford greater immunity from power problems, whether caused by weather or disruptions on the grid.

What is Software-Defined Power?

Software-Defined Power is emerging as the solution to application-level reliability issues being caused by power problems. Software-Defined Power, like the broader Software-Defined Data Center (SDDC), is about creating a layer of abstraction that makes it easier to continuously match resources with changing needs. For SDDC, the resources are the servers, storage and networking equipment, and the need is application service levels. For Software-Defined Power, the resource is the electricity required to power (and cool) all of that equipment, but the need is exactly the same: application service levels.

With Software-Defined Power, overall reliability is improved by shifting the applications to the data center with the most dependable, available and cost-efficient power at any given time. Software-Defined Power is implemented using a software system capable of combining IT and facility/building management systems, and automating standard operating procedures, resulting in the holistic allocation of power within and across data centers, as required by the ongoing changes in application load.
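To make the selection step concrete, here is a minimal sketch in Python of how a controller might choose the data center with the most dependable, available and cost-efficient power. It is an illustration only, not a description of any vendor's product: the `SitePower` fields, the `pick_site` function and the 0.99 reliability threshold are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class SitePower:
    """Hypothetical snapshot of power conditions at one data center."""
    name: str
    reliability: float        # 0.0-1.0, assumed estimate of power dependability
    spare_capacity_kw: float  # power headroom available for additional load
    price_per_kwh: float      # current electricity rate in dollars


def pick_site(sites, required_kw, min_reliability=0.99):
    """Return the cheapest site that is reliable enough and has headroom."""
    eligible = [
        s for s in sites
        if s.reliability >= min_reliability and s.spare_capacity_kw >= required_kw
    ]
    if not eligible:
        return None  # no site qualifies, so the load stays where it is
    # Among eligible sites, prefer the lowest rate, then the highest reliability.
    return min(eligible, key=lambda s: (s.price_per_kwh, -s.reliability))
```

In this sketch, dependability and availability act as hard filters and cost is only the tie-breaker among sites that pass, which mirrors the priority on application service levels described above.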

It’s About the Applications

Once configured with the service level and other requirements for all applications, the Software-Defined Power solution continuously and automatically optimizes the resource allocations as it shifts loads between or among data centers. Adding power to the already existing software-defined computing, storage and network components of an application environment makes it possible to abstract applications fully from an individual data center and its power dependency. This is what enables the shifting and shedding of application capacity across multiple data centers by adjusting the IT equipment and critical facility infrastructure required at each, resulting in the maximum possible application-level reliability at the lowest operating cost.

Not only does shifting loads between data centers help increase reliability by affording greater immunity from power problems that cause unplanned downtime, it also creates wider windows for the planned downtime required for routine maintenance and upgrades within each data center. This makes it easier to operate applications 24×7 with no adverse impact on either availability or performance from power-related issues.

Follow-the-Moon Strategies

In addition to the increased reliability, Software-Defined Power also pays for itself by minimizing energy spend and enabling participation in lucrative demand response programs. Power is the most dependable and available at night, which is also when rates for electricity are normally the lowest. So shifting the load to “follow the moon” can afford considerable savings.
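A “follow the moon” policy can be sketched in a few lines of Python. The example below assumes each site is described only by a fixed UTC offset and treats 2 a.m. local time as the middle of the night; both are simplifications made for illustration (fixed offsets ignore daylight saving time, and a real system would also weigh actual rates and grid conditions). The function names and site names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def local_hour(utc_now, utc_offset_hours):
    """Local hour of day (0-23) at a site with a fixed UTC offset."""
    return (utc_now + timedelta(hours=utc_offset_hours)).hour


def hours_from_night(hour, night_center=2):
    """Circular distance in hours between `hour` and the middle of the night."""
    diff = abs(hour - night_center) % 24
    return min(diff, 24 - diff)


def follow_the_moon(utc_now, site_offsets):
    """Pick the site whose local time is closest to the middle of the night."""
    return min(
        site_offsets,
        key=lambda name: hours_from_night(local_hour(utc_now, site_offsets[name])),
    )


# Hypothetical sites mapped to their UTC offsets in hours.
SITES = {"virginia": -5, "frankfurt": 1, "singapore": 8}
print(follow_the_moon(datetime.now(timezone.utc), SITES))
```

Called once an hour, a function like this would move the preferred site westward as night moves around the globe.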

Shifting load to a distant data center also enables shedding that load locally. A best practice in Software-Defined Power, therefore, is to power down the servers until they are needed again. This same ability to deactivate and reactivate servers can also be used to dynamically match capacity to load within a single data center on a regular schedule or in response to changing application demand.
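Matching capacity to load comes down to simple arithmetic, sketched here in Python. The example assumes load is measured in requests per second, that every server handles the same rate, and that a 20 percent safety margin is kept; these inputs and the function names are hypothetical and would differ in any real deployment.

```python
import math


def servers_needed(load_rps, per_server_rps, headroom=0.2, min_servers=1):
    """Servers to keep powered on for the given load plus a safety margin."""
    if per_server_rps <= 0:
        raise ValueError("per_server_rps must be positive")
    target = load_rps * (1 + headroom)
    return max(min_servers, math.ceil(target / per_server_rps))


def plan_power(total_servers, load_rps, per_server_rps, headroom=0.2):
    """Split a pool into servers to keep on and servers that can be powered down."""
    on = min(total_servers, servers_needed(load_rps, per_server_rps, headroom))
    return {"on": on, "off": total_servers - on}
```

For a pool of 40 servers that each handle 500 requests per second, a load of 9,000 requests per second would keep 22 servers on and allow 18 to be powered down until demand rises again.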

Because utilities pay exorbitant rates for wholesale energy during periods of peak demand, they are willing to pay commercial and industrial customers handsomely to reduce usage during these peaks. Software-Defined Power enables data centers to participate in these demand response programs without adversely impacting application service levels. Organizations can even go one step further: By knowing about potential grid issues, IT and facility managers can take preventive action to shift applications to another data center in advance of any power problems.

The combination of paying less for energy and wasting less to power (and cool) idle servers (including during demand response events) can result in savings of over 50 percent. And considering that the operational expenditure for energy alone exceeds the capital expenditure for the average server today, the electric bill for a full rack of servers can be cut by as much as $25,000 every year.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena. See our guidelines and submission process for information on participating. View previously published Industry Perspectives in our Knowledge Library.

It’s common knowledge that Google is closing its Google Reader service, and that July 1 deadline is creeping ever closer. Now is the perfect time to switch to an alternative service and become acclimatized to a slightly different way of working, and the good news is that you can make the switch in minutes without having to perform any convoluted tricks, thanks to

It’s common knowledge that Google is closing its Google Reader service, and that July 1 deadline is creeping ever closer. Now is the perfect time to switch to an alternative service and become acclimatized to a slightly different way of working, and the good news is that you can make the switch in minutes without having to perform any convoluted tricks, thanks to

Linux users are a strange bunch. As a distro gets popular, it tends to lose credibility with the Linux elitists. It is much like an underground rock band. As the band gains mainstream success, the original fans view the band as “sell-outs”. For instance, Ubuntu, the most popular Linux distro, is viewed negatively by many as a beginner distro (Linux users only feel this way because of its success — Ubuntu is a wonderful OS). Linux Mint however, is the exception to the rule — it is revered by newbies and elite users alike. This is despite its long-held top spot on

Linux users are a strange bunch. As a distro gets popular, it tends to lose credibility with the Linux elitists. It is much like an underground rock band. As the band gains mainstream success, the original fans view the band as “sell-outs”. For instance, Ubuntu, the most popular Linux distro, is viewed negatively by many as a beginner distro (Linux users only feel this way because of its success — Ubuntu is a wonderful OS). Linux Mint however, is the exception to the rule — it is revered by newbies and elite users alike. This is despite its long-held top spot on  Instead of Unity, Linux Mint generally offers two desktop environments — Cinnamon and Mate (other environments such as KDE are usually made available later). Both of these are forks of Gnome. Mate is a fork of Gnome 2 whereas Cinnamon is a fork of Gnome 3. To clarify, a “fork” is when someone alters an existing program’s source code. A program is typically forked when someone is dissatisfied with the program in its existing state. For the purpose of this article and test, I am using Cinnamon as it is more “modern” than Mate.

Instead of Unity, Linux Mint generally offers two desktop environments — Cinnamon and Mate (other environments such as KDE are usually made available later). Both of these are forks of Gnome. Mate is a fork of Gnome 2 whereas Cinnamon is a fork of Gnome 3. To clarify, a “fork” is when someone alters an existing program’s source code. A program is typically forked when someone is dissatisfied with the program in its existing state. For the purpose of this article and test, I am using Cinnamon as it is more “modern” than Mate. Also new in Mint is a new version of the Nemo file manager. To those that don’t know, Nemo is a forked version of Gnome’s “Files” program. I normally consider myself a purist when it comes to Gnome programs. However, Nemo greatly improves upon the original — it is now vastly superior to Files. The UI is far better including a new bar that sits under each drive and tells you how much space is left. Again, it’s the little things that matter sometimes.

Also new in Mint is a new version of the Nemo file manager. To those that don’t know, Nemo is a forked version of Gnome’s “Files” program. I normally consider myself a purist when it comes to Gnome programs. However, Nemo greatly improves upon the original — it is now vastly superior to Files. The UI is far better including a new bar that sits under each drive and tells you how much space is left. Again, it’s the little things that matter sometimes. On Thursday, South Korean manufacturer Samsung announced a new smartphone part of its upscale Android lineup, called Galaxy S4 Mini. The handset is marketed as a smaller variant of the company’s current green droid flagship, the Galaxy S4, but don’t expect any of the latter’s bells and whistles.

On Thursday, South Korean manufacturer Samsung announced a new smartphone part of its upscale Android lineup, called Galaxy S4 Mini. The handset is marketed as a smaller variant of the company’s current green droid flagship, the Galaxy S4, but don’t expect any of the latter’s bells and whistles. Software versioning has changed a great deal over the years. It used to be that version 1 of an application would be released and it would be followed in around a year’s time by version 2. You might well find that updates would be released in the interim — versions 1.1 and 1.2 for example — but it didn’t take long for things to start to get more complicated.

Software versioning has changed a great deal over the years. It used to be that version 1 of an application would be released and it would be followed in around a year’s time by version 2. You might well find that updates would be released in the interim — versions 1.1 and 1.2 for example — but it didn’t take long for things to start to get more complicated. In theory, a free online storage account sounds like it should be a great way to share files with others. And this can be true, at least sometimes, but there are complications. Like having to upload your data first, for instance. And then trusting its security to your service provider.

In theory, a free online storage account sounds like it should be a great way to share files with others. And this can be true, at least sometimes, but there are complications. Like having to upload your data first, for instance. And then trusting its security to your service provider.

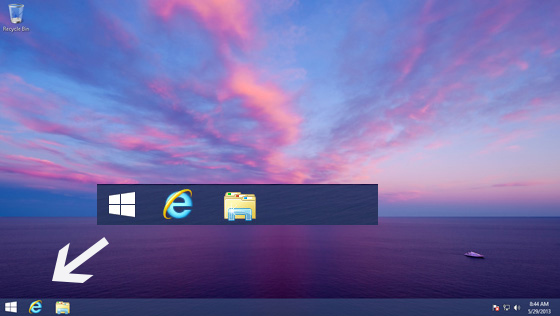

Ringo Starr admits he gets frustrated that all people ever want to talk to him about is The Beatles. The developers of Windows 8 must feel similarly annoyed that despite all the changes in the new OS, all anyone wants to talk about is the Start button.

Ringo Starr admits he gets frustrated that all people ever want to talk to him about is The Beatles. The developers of Windows 8 must feel similarly annoyed that despite all the changes in the new OS, all anyone wants to talk about is the Start button. But of course all anyone wants to talk about is the Start button, so let’s do that. Yesterday Microsoft blogger Paul Thurrott confirmed the return of the

But of course all anyone wants to talk about is the Start button, so let’s do that. Yesterday Microsoft blogger Paul Thurrott confirmed the return of the  However, the beauty of the Windows 7 Start menu is you can launch other programs, and access folders and settings without losing sight of any open windows. The Apps page is full screen, which will — understandably — annoy some people.

However, the beauty of the Windows 7 Start menu is you can launch other programs, and access folders and settings without losing sight of any open windows. The Apps page is full screen, which will — understandably — annoy some people.