By Scott M. Fulton, III, Betanews

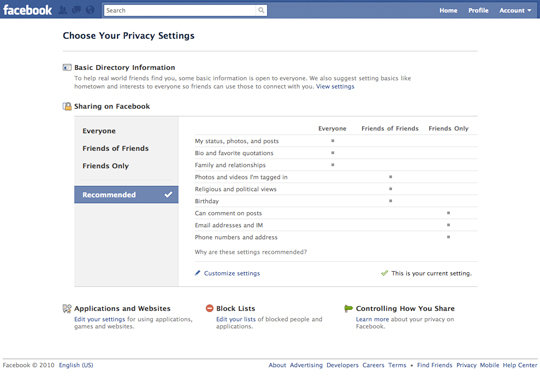

Yesterday, the Chairman of the European multi-national group of ministers overseeing online privacy policy enforcement, Jacob Kohnstamm of the Article 29 Working Party (WP29), sent letters to the CEOs of Google, Microsoft, and Yahoo, urging them to alter their personal data retention policies in keeping with new EU standards. Kohnstamm wants their search engines to destroy personal data after six months rather than nine, which is Google’s current policy; he simultaneously asked European Commission Vice President Viviane Reding for help getting that message across.

In less than a day, Kohnstamm got his wish: This morning in Brussels, Comm. Reding publicly called on the United States to forge an agreement that would enable the EC to sue the search engine leaders in US courts for failure to follow EU guidelines for data protection.

“The EU and US are both committed to the protection of personal data and privacy. However, they still have different approaches in protecting data, leading to some controversy in the past when negotiating information exchange agreements (such as the Terrorist Finance Tracking Programme, so-called SWIFT agreement, or Passenger Name Records),” reads this morning’s statement from Brussels. “The purpose of the agreement proposed by the Commission today is to address and overcome these differences. Today’s proposal would give the Commission a mandate to negotiate a new data protection agreement for personal data transferred to and processed by enforcement authorities in the EU and the US. It would also commit the Commission to keeping the European Parliament fully informed at all stages of the negotiations.”

In a taped address this morning, Comm. Reding characterized the agreement not only as essential for protecting citizens’ rights, but also as a necessary tool for both the US and EU in the war on terrorism.

“We all want to have control over our personal information. This is why the EU has rules on the protection of personal data,” Reding said. “Our rights are clear and must be respected. Whenever your personal information is collected, whenever it is processed, and whenever it is used, these are the high standards we must live up to…We are facing common security challenges from international crime and terrorism. We have been confronted with devastating attacks in Europe and in the United States. We are working hard with the US to confront these challenges.”

Yesterday, the European Commission published the draft of a letter to the CEO of Google (PDF available here). It’s easy to determine it was a draft since Chairman Kohnstamm left blanks where he intended to look up and confirm the name “Eric Schmidt” (unless Schmidt actually did receive a letter where his own name was omitted in the salutation “Dear,”).

What Kohnstamm did not leave blank was his view of the pointlessness of Google’s so-called anonymization policy, which for Google is not actually a destruction of personally identifiable data but a partial erasure of it. The specific part being erased is the last octet of the IP address for the computer being tracked. Although in practice, IP addresses are not necessarily personally identifiable, EU policy treats IP addresses as personal since, in theory, they can be used in determining the identity of users. Kohnstamm told the unnamed Mr. Schmidt that it’s an academic matter for a database manager to align a partial IP address older than nine months with personal cookie data, which Google retains for 18 months.

“In its opinion, WP29 stressed the sensitivity of personal data related to search queries. I know that Google also shares this concern,” Kohnstamm wrote. “In response to the opinion, your company publicly announced you will ‘anonymize’ IP addresses in your server logs after 9 months. In practice, you have indicated that you will delete the last octet of the IP addresses held in the search query log files after a period of 9 months… deleting the last octet of the IP-addresses is insufficient to guarantee adequate anonymisation. Such a partial deletion does not prevent identifiability of data subjects. In addition to this, you state you retain cookies for a period of 18 months. This would allow for the correlation of individual search queries for a considerable length of time. It also appears to allow for easy retrieval of IP addresses, every time a user makes a new query within those 18 months. Therefore, WP29 cannot conclude your company complies with the European data protection directive.”
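The concern in Kohnstamm's letter can be illustrated with a minimal sketch. It assumes a simplified log of `(cookie_id, ip, query)` records; the function names, record layout, and all values are hypothetical (the addresses come from a range reserved for documentation), and none of it reflects how any search engine actually stores its logs. It shows only that zeroing the last octet narrows an address to one of 256 candidates, and that a cookie ID still present in newer logs can link the truncated record back to a full address.

```python
import ipaddress


def drop_last_octet(ip: str) -> str:
    """Zero the final octet of an IPv4 address (last-octet deletion)."""
    network = ipaddress.ip_network(f"{ip}/24", strict=False)
    return str(network.network_address)


# Hypothetical, made-up records: (cookie_id, ip, query).
# The old record has already had its last octet removed.
old_log = [("cookie-A", drop_last_octet("192.0.2.77"), "old query")]
recent_log = [
    ("cookie-A", "192.0.2.77", "new query"),
    ("cookie-B", "192.0.2.140", "other query"),
]


def reidentify(old_records, recent_records):
    """Join truncated records to recent full records on the cookie ID."""
    recent_ip_by_cookie = {cookie: ip for cookie, ip, _ in recent_records}
    matches = []
    for cookie, truncated_ip, query in old_records:
        full_ip = recent_ip_by_cookie.get(cookie)
        # Keep the match only if the full address is consistent with the
        # truncated one, i.e. both fall in the same /24 block.
        if full_ip is not None and drop_last_octet(full_ip) == truncated_ip:
            matches.append((query, full_ip))
    return matches


# A truncated address still identifies a block of this many addresses.
candidates = ipaddress.ip_network("192.0.2.0/24").num_addresses
recovered = reidentify(old_log, recent_log)
print(candidates, recovered)
```

In this toy data, the old query is tied back to a full address as soon as the same cookie appears in a newer record, which is the correlation the letter describes.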

Kohnstamm wrote a similar letter to Microsoft CEO Steve Ballmer, whose name was also left blank in the draft published by the EC. In it, he commends Microsoft for promising to anonymize Bing’s personal data after six months, as the EC requested. However, he doesn’t believe that promise is being kept, since it was apparently contingent upon Google’s and Yahoo’s willingness to follow suit. He also pointed out that last-octet deletion is pointless as an anonymization tool, a message he also sent to (unnamed) Yahoo CEO Carol Bartz. Yahoo has pledged to anonymize personal data after three months.

In a separate letter to US Federal Trade Commission Chairman Jon Leibowitz yesterday (insert name, please), Kohnstamm called upon the FTC to investigate whether the three search engines’ failure to comply with EC directives constitutes “unfair or deceptive acts or practices in the marketplace” under Section 5 of the FTC Act. If it did — or if there was a reasonable theory that it did — such a finding could be the legal basis for charging Yahoo, Microsoft, and Google with fraud in US courts.

And if the agreement were written as Comm. Reding would prefer, according to the statement from Brussels this morning, it wouldn’t have to be the European Commission initiating the action. A European citizen could sue these parties in US courts for fraud and deception, if he believed his privacy was violated: “There would be an individual right of administrative and judicial redress regardless of nationality or place of residence.”

The US State Dept. has yet to issue a statement on the matter. Sec. of State Hillary Clinton is currently in Seoul, South Korea, in meetings with its president and foreign minister over the alleged sinking of a South Korean naval vessel by North Korea.

Copyright Betanews, Inc. 2010

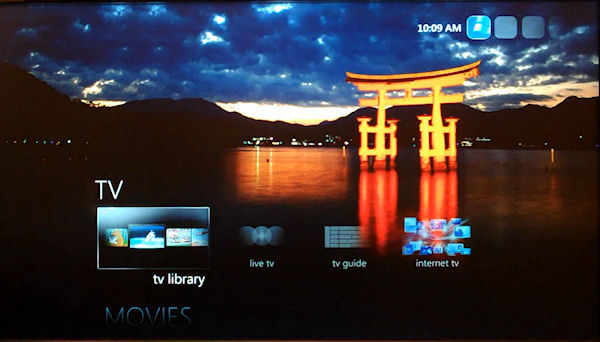

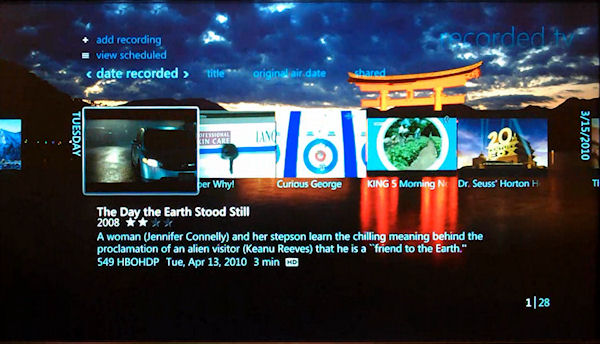

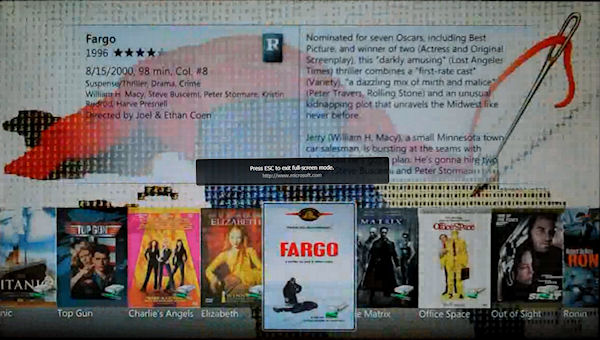

When a massive Microsoft corporate reorganization on September 20, 2005 vaulted Robbie Bach into the role of President of the Entertainment & Devices division, the explanation at the time was to enable the company to focus on devices where the goal was to promote devices, and on platforms where the goal was to promote devices. Xbox was a device, whatever MP3 player the company would decide to produce was a device, and obviously cell phones are devices should Microsoft ever choose to enter that business in earnest.

It’s a development that indicates that Microsoft realizes the importance of these assets as platforms, rather than as mere devices — a realization made feasible by the work of Bach and Allard. And yet off they go to parts unknown.

The latest hybrid notebook storage device announced today by Seagate Technology, the

![Seagate's cross-section depiction of its new Momentus XT hybrid SSD/HDD. [Courtesy Seagate]](http://images.betanews.com/media/5024.jpg)

Fast-forward to today, when Seagate product marketing manager Joni Clark triumphantly announces to Betanews that Momentus XT will not be bound to ReadyDrive at all.

![A slide from a Seagate presentation revealing the benchmark test results for 'real-world' applications with its Momentus XT hybrid SSD/HDD drive. [Courtesy Seagate]](http://images.betanews.com/media/5025.jpg)

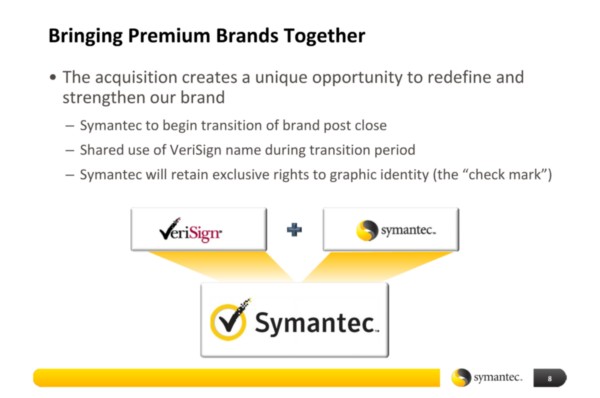

On the surface, it might sound like one of those amateurish conclusions a blogger might reach after having just read the press release: Symantec, a software company now mainly known for security products, acquires some assets from a non-competitor in order to get that company’s logo. But in the deal between Symantec and VeriSign announced yesterday, there is no mistaking the fact that the antivirus products maker acquired, among other things, the single asset that just last week VeriSign argued was the ticket to its own future stability: quite literally, its own logo.

There are a handful of issues of contention that broadcasters (who transmit content over the public airwaves) have with the Federal Communications Commission’s Broadband Plan. One such outstanding dispute concerns the FCC’s proposed reallocation of unused digital spectrum from broadcast to broadband purposes — a way to get at least some of the estimated 180 MHz of spectrum wireless operators say they need, without another complete re-auction.

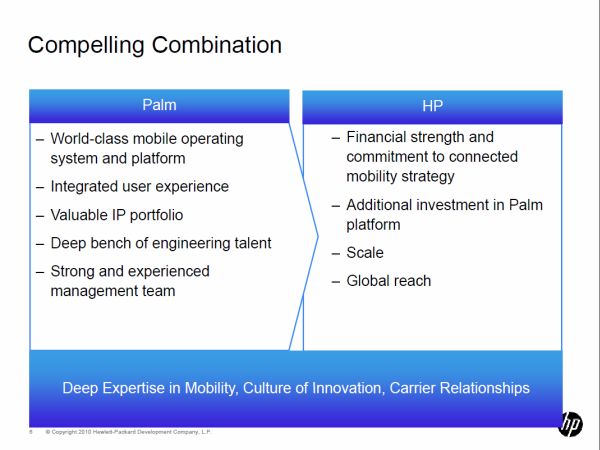

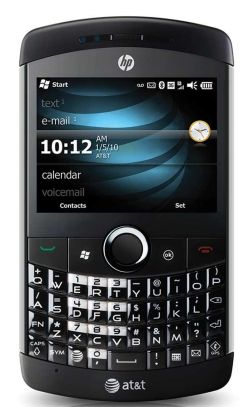

But waving in front of HP’s obvious red flags were several more obvious white ones:

Indeed, last week, Rubin was talking with us about the synergies that could