Let’s face it: secure online authentication is a chore. Apart from the few people who enjoy using very complex passwords, a password manager, or both, most of us find it difficult to use a secure combination of characters for each and every website where we have an account. Two-factor authentication is not all that comfortable to manage either, since it requires a secondary means of generating a secure code. Often that’s a token given by the bank, a text message sent by the service provider, or an app.

Is that modern? Well, it depends on your definition of the word modern. I consider today’s online authentication to be only a slight evolution of the methods we have used over the last decade. That’s not to say it is a bad thing, but it is certainly not where the visionary pictures, videos or predictions from not too long ago would have us today. We’re not using flying cars, that’s for sure, nor some wonder authentication method for that matter.

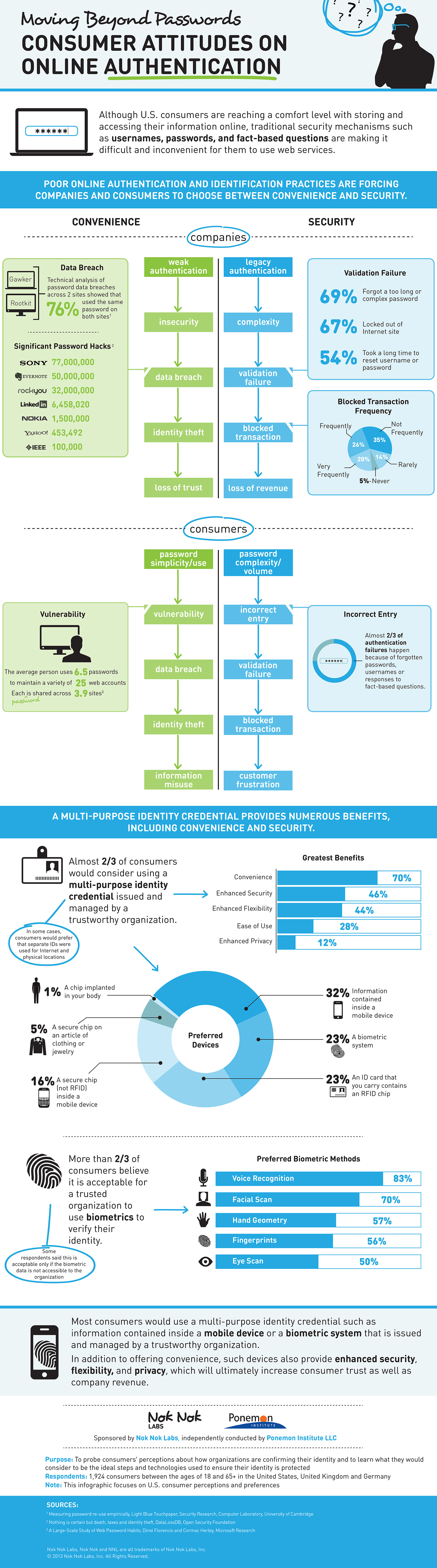

According to a study carried out by the Ponemon Institute, which surveyed 1,924 consumers in Germany, the United Kingdom and the United States, two thirds of the respondents would consider using a “multi-purpose identity credential” that is issued and managed by a trustworthy organization in order to authenticate with various services.

The largest share of respondents, 32 percent, answered that they would prefer using “information contained inside a mobile device”. A biometric system and an ID card with an RFID chip follow, each favored by 23 percent of the respondents. The least popular method is, wait for it, a chip implanted in one’s body. Unsurprisingly, that one got a mere 1 percent of the respondents behind it.

Judging by the other data provided by the study, the answers above shouldn’t come as a big surprise. Only five percent of the respondents haven’t experienced authentication failures during online transactions, while 26 percent, 29 percent and 34 percent of respondents from the US, UK and Germany, respectively, have experienced them frequently.

Furthermore, 54 percent, 53 percent and 47 percent of the respondents from the US, UK and Germany, respectively, have said that it takes too long to reset a username or password. The same numbers, and higher, also apply to forgetting passwords that are too complex or too long, and to getting locked out of websites due to authentication failures.

What is interesting, though, is that consumers don’t want the easy way out either. According to the study, 46 percent, 45 percent and 65 percent of the respondents from the US, UK and Germany, respectively, have trouble trusting systems or websites that rely on passwords as the sole means of authentication.

Slightly lower shares of those surveyed, 38 percent, 37 percent and 46 percent, display a similar distrust towards systems or websites that do not ask the user to change the password on a frequent basis.

After all is said and done, security is still a very serious problem, with potential risks that shouldn’t be taken lightly. From a personal point of view, I find it rather difficult to believe that enough consumers are actively searching for ways to protect their online accounts, or any other important accounts.

It’s in our nature, as tech-oriented folks, to be aware of the dangers lurking around every corner, but for the most part I think the number of people who do something about it, where it matters, is quite low overall. For this reason, I believe that new means of authentication and new devices designed to keep our online endeavors safe will not be adopted easily.

Infographic Credit: Nok Nok Labs

MATTHEW GOULDING
