(Reuters) – Michael Dell and his investment firm are ponying up $750 million in cash toward the $24.4 billion purchase of Dell Inc to help bankroll the largest private equity-backed buyout since the financial crisis.

The Dell founder and CEO this week struck a deal to take private the company he created out of a college dorm room in 1984, partnering with private equity house Silver Lake and Microsoft Corp.

Michael Dell will contribute $500 million of his own cash, and MSDC Management – an affiliate of his investment vehicle, MSD Capital – will contribute another $250 million, according to a company filing on Wednesday.

Dell Inc also said it is targeting the repatriation of $7.4 billion of cash now parked abroad to help finance the deal. That may dismay some shareholders, as a hefty tax is usually levied on cash brought back from overseas.

The deal, which ends Dell’s rocky 24-year run on the Nasdaq just as the once-dominant PC maker struggles to revive growth, is contingent on approval by a majority of shareholders — excluding Michael Dell himself.

Several shareholders, including prominent investor Frederick “Shad” Rowe of Greenbrier Partners, have spoken out against the deal, protesting a lack of specifics as well as a potential conflict of interest with Michael Dell being the company’s single largest shareholder with a roughly 16 percent stake.

“Some shareholders are glad. But there are others who feel it’s a raw deal,” said Shaw Wu, an analyst with Sterne Agee, who has spoken with several Dell shareholders since the announcement but declined to provide further details.

AND SO IT BEGINS

Dell was regarded as a model of innovation as recently as the early 2000s, pioneering online ordering of custom PCs and working closely with Asian suppliers and manufacturers to assure rock-bottom production costs. But it missed the big industry shift to tablet computers, smartphones and high-powered consumer electronics such as music players and gaming consoles.

Executives said on Tuesday the company will stick to a strategy of expanding its software and services offerings for large companies, with the goal of becoming a provider of corporate computing services – like the highly profitable IBM. They played down speculation that the company may spin off the low-margin PC business on which it made its name.

The company has not given many specifics on what it would do differently as a private entity, angering some shareholders who said they needed more information to determine whether the $13.65-a-share deal price – a 25 percent premium to Dell’s stock price before buyout talks leaked in January – was adequate.

On Wednesday, an individual shareholder filed the first lawsuit, in Delaware, attempting to stop the buyout. The lawsuit – which is seeking class-action status – maintains that the $13.65 per share offered sharply underestimated the company’s long-term prospects.

“By engaging in the going private transaction now – in the midst of the company’s transition from a PC vendor to full service software and enterprise solution provider – the board is allowing defendants M. Dell and Silver Lake to obtain Dell on the cheap,” read the lawsuit filed by Catherine Christner.

Dell, the world’s No. 3 personal computer maker, broke down details of the equity and debt financing secured for the buyout in Wednesday’s filing.

Silver Lake is putting up $1.4 billion, while banks including Bank of America, Barclays, Credit Suisse and RBC will provide roughly $16 billion in term loans and other forms of financing.

Wednesday’s filing also disclosed that under certain circumstances if the merger cannot be completed, Michael Dell and Silver Lake could have to pay a termination fee of up to $750 million to the company.

Microsoft’s efforts to downplay Google’s Gmail over its own Outlook.com service are well known amongst the tech crowd. In late-November the Redmond, Wash.-based corporation claimed that

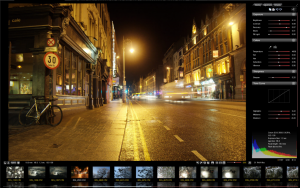

Take a photo with most digital cameras and by default you’ll get a JPG file, which is great for compatibility purposes, but does involve some compromises in image quality. And that’s because your picture will go through various processes before the final JPG is produced — sharpening, adjusting colors and contrast, compressing the results — and each step results in the loss of some information.

Life gets more interesting when you click the Adjustments tab, though, where Scarab Darkroom provides tweaks for Exposure (Brightness, Contrast, Recovery, Blacks, Fill Light), Colors (Temperature, Tint, Hue, Saturation, Vibrance), Tone Curve (Highlights, Midtones, Shadows) and Sharpness. Drag a particular slider and the picture will update accordingly, giving you immediate feedback. It’s also easy to copy your settings to the clipboard and restore them later, so once you’ve found a configuration that delivers good results you can quickly apply it to all your other shots.

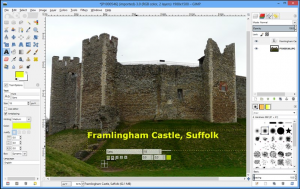

Popular open-source image editor  Platform-specific improvements concentrate on OS X: the gimpdir has been moved to the user’s Library/Application Support folder, while the system screenshot tool is now used when creating a new image from a screenshot. Plug-in windows should now automatically appear on top, and users can now select their chosen language via GIMP’s Preferences dialog.

According to Symantec, businesses are increasingly at risk of insider IP theft, with staff moving, sharing and exposing sensitive data on a daily basis and, worse still, taking confidential information with them when they change employers.

Three days ago evad3rs
